layout(t(1:3))

V <- function(X,a) -a * X + X^3

curve(V(x, a = -2), -3, 3, bty = 'n')

curve(V(x, a = 0), -3, 3, bty = 'n')

curve(V(x, a = 2), -3, 3, bty = 'n')3 Transitions in complex systems

3.1 Introduction

My dissertation research was on Jean Piaget’s stage theory of cognitive development. These stages were separated by transitions. One such transition should occur between the pre-operational and concrete-operational stages. In the concrete-operational stage, children learn logical, concrete physical rules about objects, such as weight, height, and volume. The most famous test to distinguish between the two stages is the conservation task.

There are many conservation tasks, but the setup is always the same. For example, you show a child two equal balls of clay, ask for confirmation that they weigh the same, roll one into a sausage shape, and then ask again for confirmation of equal weight. A nonconserving child will now claim that the longer sausage weighs more. One can also do this with two rows of coins (spreading one row out) or two glasses of water (pouring the water from one glass into a smaller longer glass). It is actually a fascinating task to do with children between five and eight years old.

From the 1960s to the 1980s, this was a topic of major interest in developmental psychology. A key question was whether there really was a stage transition, and there was a lot of confusion about what a transition actually was. It was my task to clarify this and to prove Piaget’s hypothesis. I think I succeeded in clarifying the question, but whether I succeeded in proving the stage theory is debatable.1

My PhD advisor Peter Molenaar had the idea to use catastrophe theory to define the concept of a transition in a precise way, to use the so-called catastrophe flags to test the hypothesis of a transition, and also to fit a cusp model to the conservation data. It took me, with the help of many people, more than 20 years to do all these steps (van der Maas and Molenaar 1992; Jansen and van der Maas 2001; Dolan and van der Maas 1998; Grasman, van der Maas, and Wagenmakers 2009).

What is catastrophe theory, what are these flags, and what is the cusp? These are the first questions I will answer in this chapter. But this chapter is also about statistics. You will learn how to fit a cusp model to data. I will present a methodology for studying transitions in areas where we do not have a mathematical description of the underlying system. I will present examples from very different subfields of psychology. Finally, I will discuss the criticisms that were made in response to the hype around catastrophe theory about fifty years ago. This is a long chapter, but I have tried to include only what is necessary in order to make intelligent use of this approach. This requires some basic understanding of the mathematics of catastrophe theory, a good overview of the possibilities for testing cusp models, and knowledge of the controversies from the early days of the popularization of this theory.

3.2 Examples of transitions

In Chapter 1, Section 1.5, I stated that complex systems tend to be characterized by a limited number of equilibria. As we saw in the Chapter 2, these equilibria can take many different forms, but in this chapter, we consider only stable and unstable fixed points. We are particularly interested in the case where the configuration of stable and unstable points changes due to a smooth change in some external variable, a control variable. In such a case a discontinuous change or a (first-order) phase transition can occur. A transition or tipping point is an intriguing property of complex systems.

I call it an intriguing property because in the linear systems we are used to, smooth changes in control variables lead to similar (proportional) changes in behavior variables. We may see a big change in some behavior, but this requires a big change in the controls. An example would be the speed of your bike and the force you apply. But in the case of fear or panic, this process is often nonlinear.

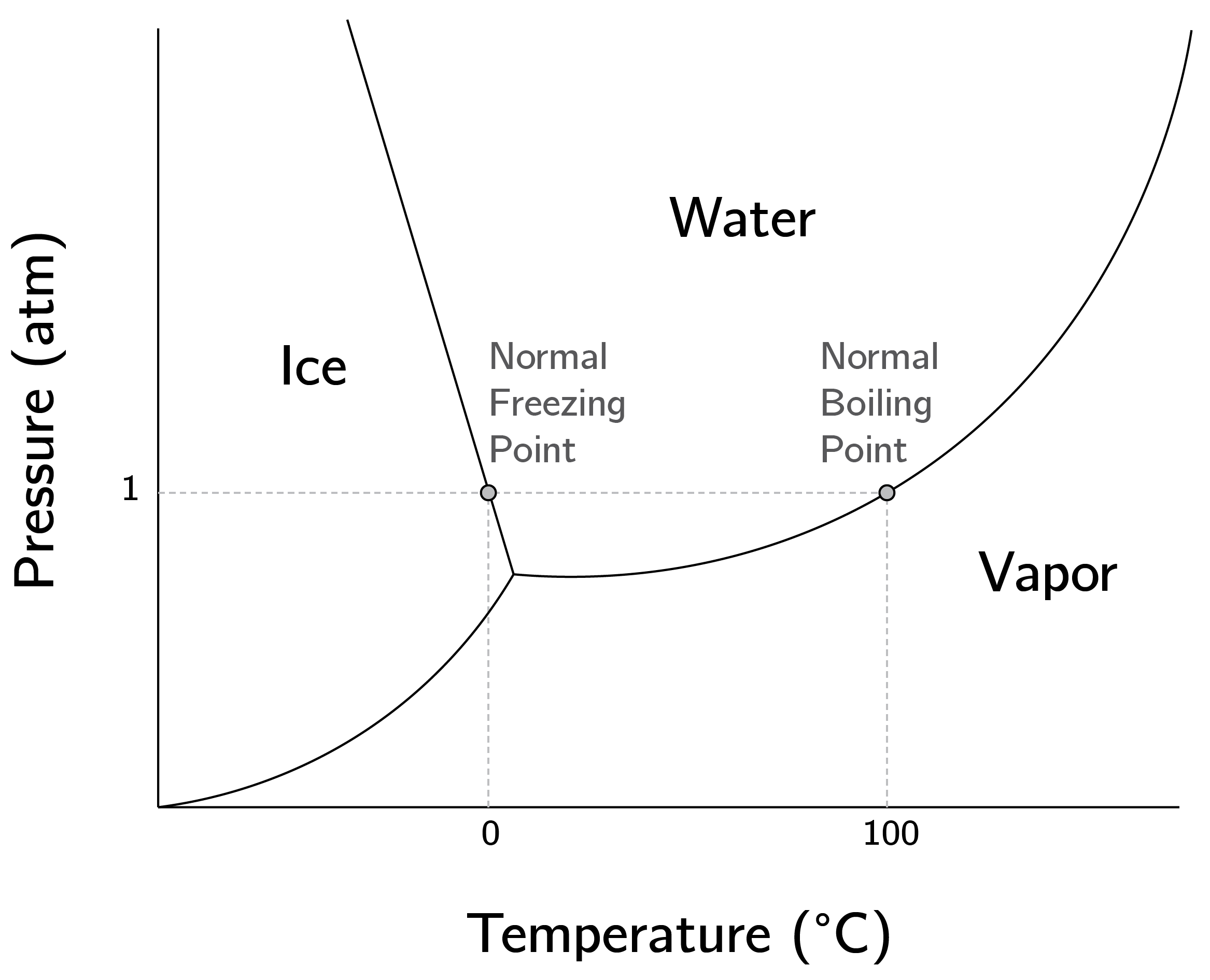

A key physical example is the change in state of water. Between, say, 10 and 80 degrees Celsius, a smooth change in temperature results in only a slight change in the liquid state of water. But if we change the temperature very slowly, close to the thresholds of 0 or 100 degrees Celsius, we see sudden phase transitions.

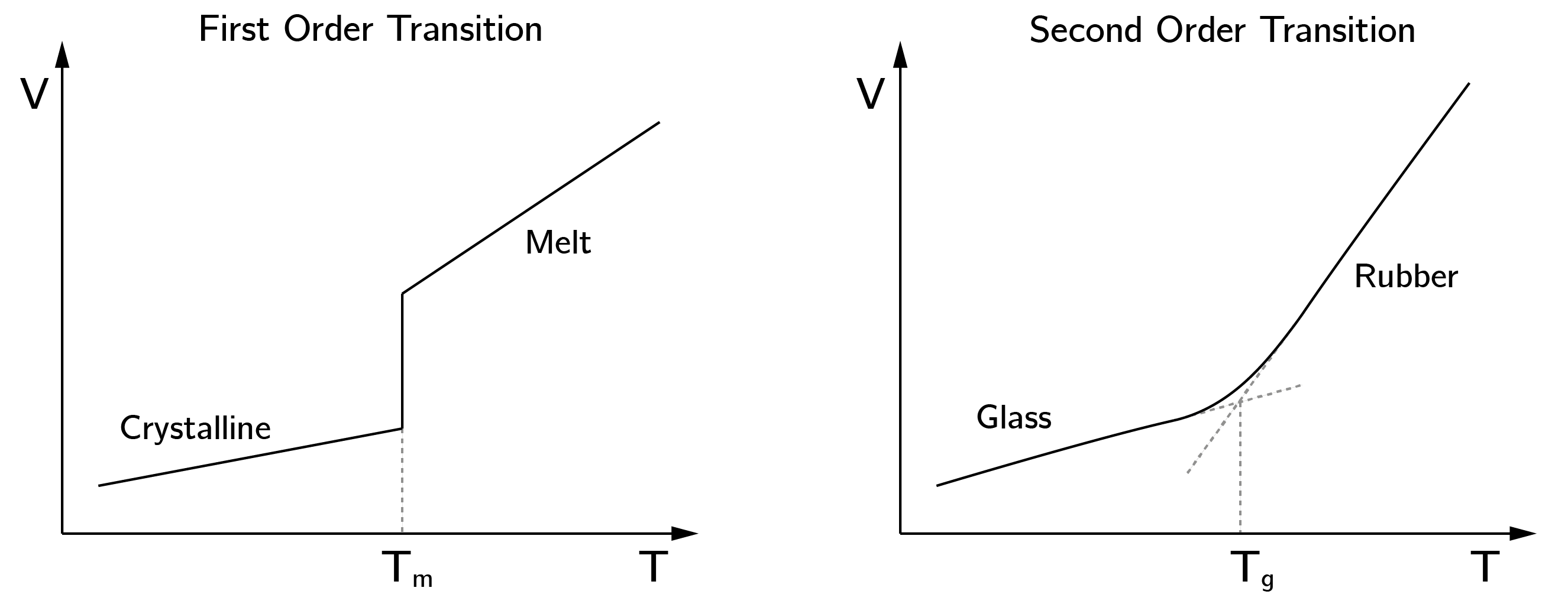

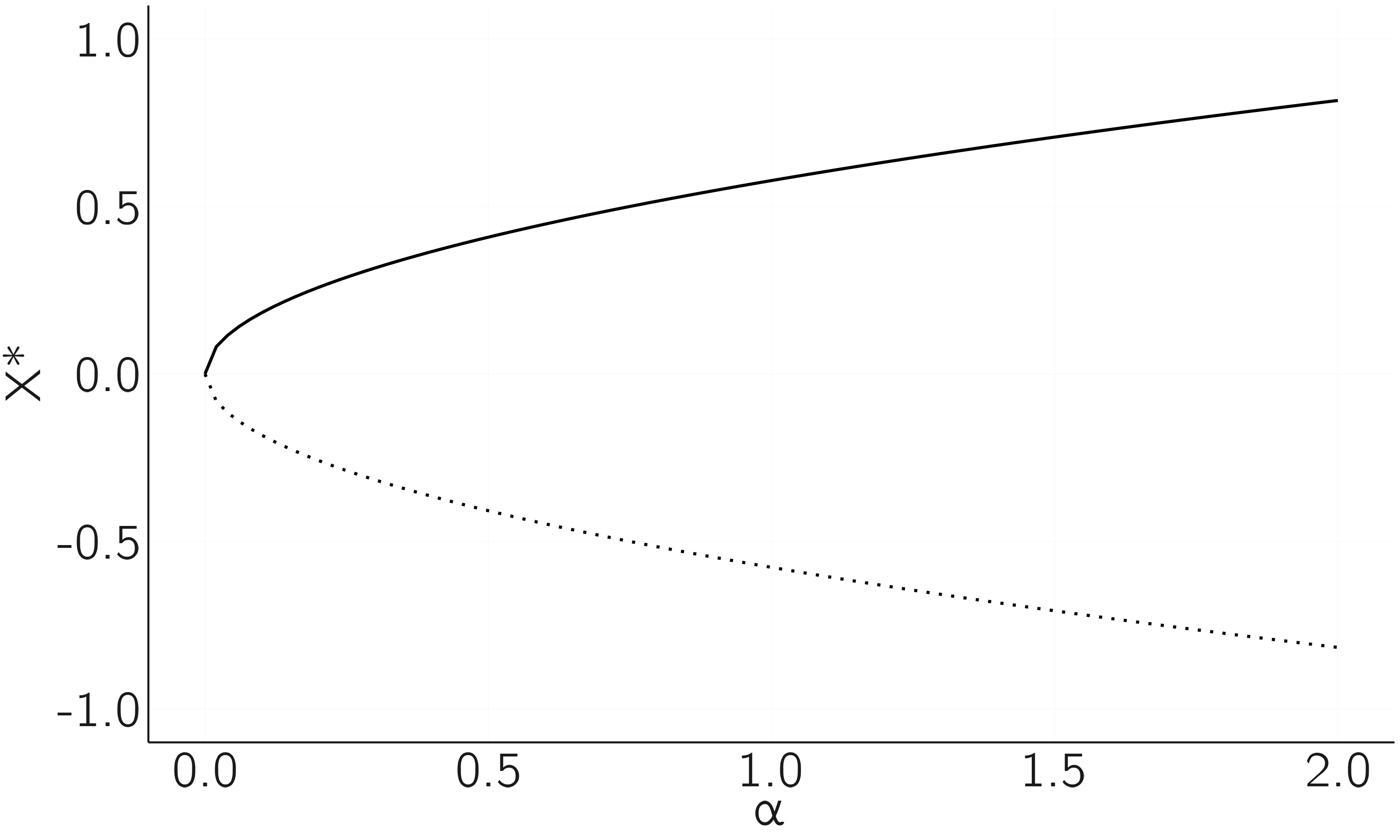

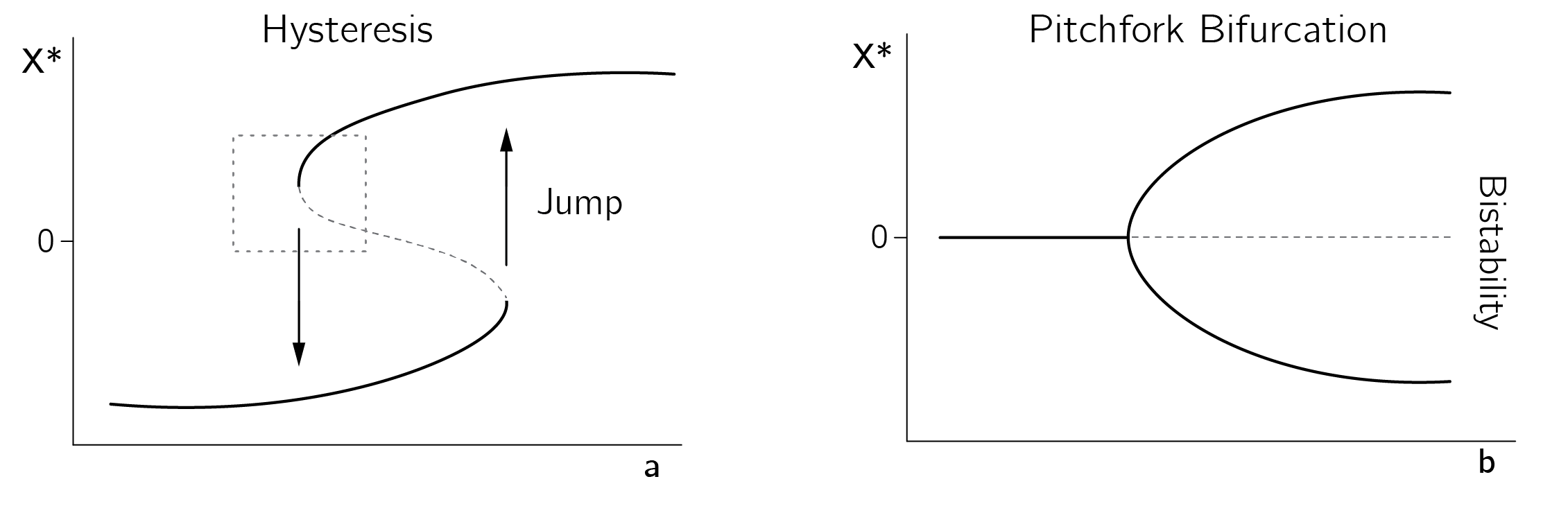

We saw something similar for the logistic map when \(r\) crossed the boundary at \(r = 1\). However, this did not lead to a sudden change in \(X^{*}\). This is often called a second-order phase transition, meaning that the configuration of stable and unstable points changes, but there is no discontinuous change in behavior (figure 3.1).2

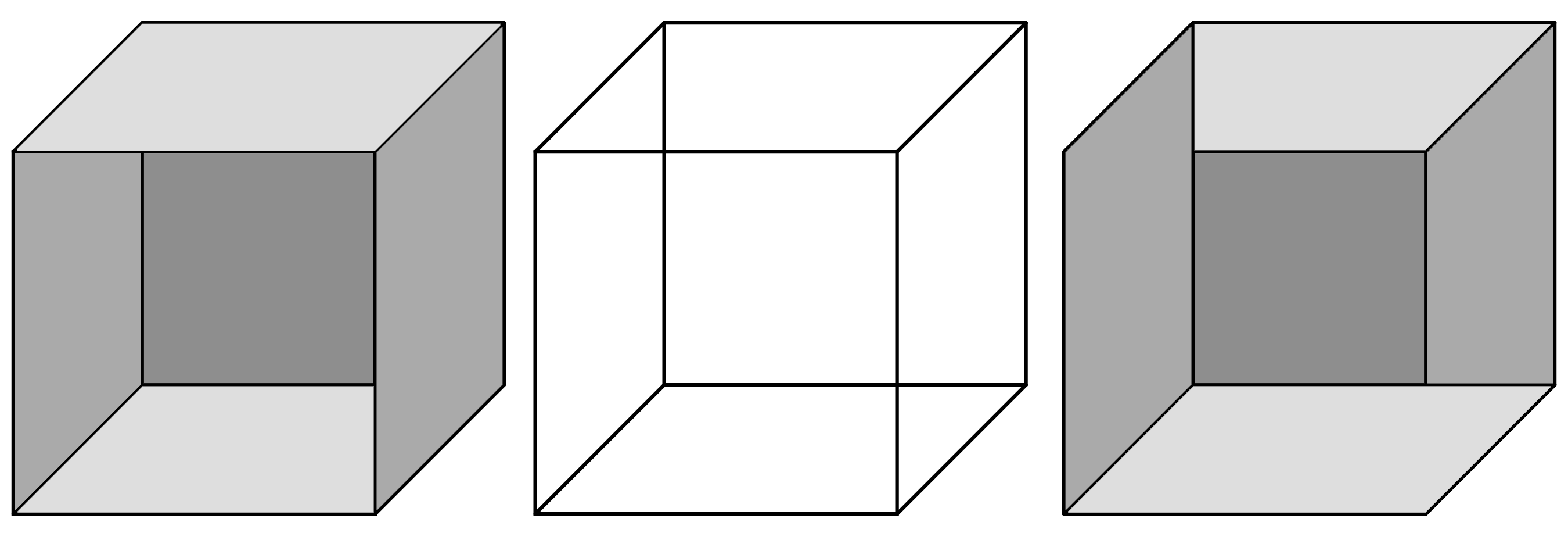

Discontinuous phase transitions such as melting and freezing occur in many systems. Famous examples from the natural sciences include collapsing bridges, capsizing ships, cell division, and climate transitions such as the onset of ice ages. Examples from the social sciences include conflict, war, and revolution. Some examples from psychology are falling asleep, outbursts of aggression, radicalization, falling in love, sudden insights, relapses into depression or addiction, panic, and multistable perception. The perception of the Necker cube is a famous example (figure 3.2). Building and testing models of these psychological transitions is challenging but rewarding. These transitions involve large changes in behavior, in contrast to the smaller, often marginally significant effects typically observed in psychological intervention studies.

3.3 Bifurcation and Catastrophe theory

Bifurcations occur when equilibria disappear, appear, or split. Simply put, bifurcation theory studies how small changes in parameters or conditions can lead to large changes in outcomes in mathematical systems.

Catastrophe theory can be viewed as a branch of bifurcation theory, describing a subclass of bifurcations. It was developed by René Thom (1977) and popularized by Christopher Zeeman (1976). The reason I chose to focus on catastrophe theory in this chapter is fourfold: First, it provides one of the few systematic treatments of bifurcations. A systematic treatment is more effective than simply listing all types of bifurcations. Second, once you have a grasp of the basics of catastrophe theory, it becomes easier to learn about other bifurcations not encompassed by this theory. Third, it is the most widely used approach in psychology and the social sciences. Finally, the field has developed an empirical program and statistical procedures for the practical application of catastrophe theory.

Catastrophe theory is concerned with gradient systems. These are dynamic systems that can be described by a potential function. Potential functions can be thought of as landscapes with minima and maxima in which we throw a ball and see where it ends up. The simplest case, discussed in the next section, is the quadratic minimum. We can also study what happens to the ball if the landscape changes shape smoothly and a minimum disappears. Then sudden jumps can occur.

Minima and maxima are called critical points, points where the first derivative of the potential function is 0. Catastrophe theory analyzes so-called degenerate critical points of the potential function. Phase transitions can occur at these bifurcation points. Thom proved that there are only seven fundamental types of catastrophes (given a limited set of control parameters). I will start with a mathematical introduction and, after explaining the main concepts, give some psychological examples. An in-depth discussion of the role of potential functions in catastrophe theory can be found in the introduction of chapter 1 of Gilmore (1993).

3.3.1 The quadratic case

Thom’s theorems are known to be highly complicated, but the basic concepts are not that difficult to grasp. The simplest potential function is

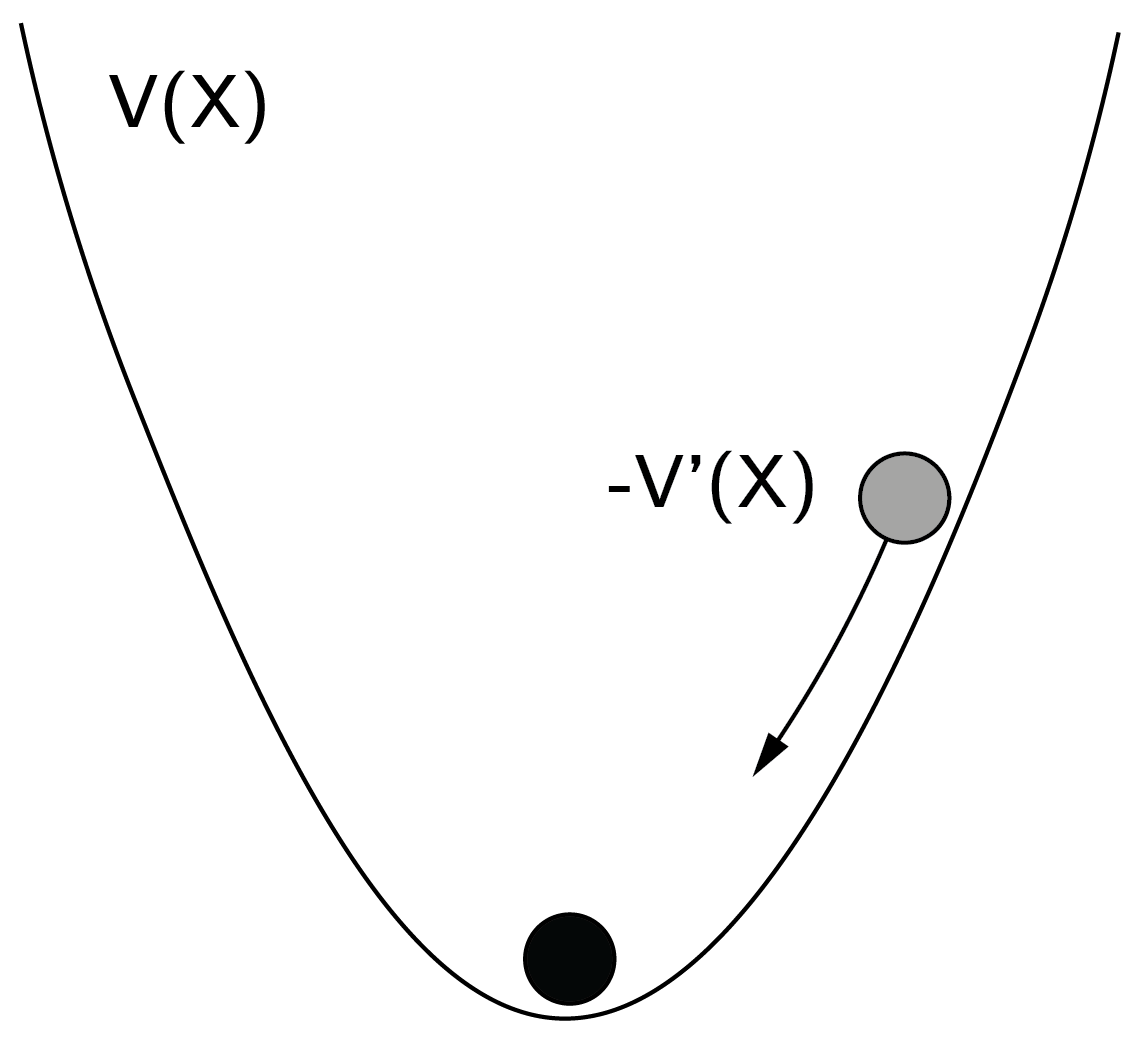

\[ V(X) = X^{2}. \tag{3.1}\]

Imagine a ball in a landscape. The ball will roll to the minimum of the potential function (figure 3.3). We learned in school that this is the point where the first derivative is 0 and the second derivative is positive. The first and second derivatives are \(V^{'}(X) = 2X\) and \(V^{''}(X) = 2\), respectively. At \(X = 0\) we find the minimum.

The potential function describes a dynamical system defined by

\[ \frac{dX}{dt} = - V^{'}(X). \tag{3.2}\]

This makes sense. When the ball is in \((1,1)\), \(- V^{'}(X) = - 2\) and the ball will move toward \(X = 0\). But if \(X = 0\), \(- V^{'}(X) = 0\), and the ball will not move anymore. In the case of the quadratic potential function, there is only one fixed point. By adding parameters and lower order terms to \(V\), that is, \({aX + X}^{2}\), we can move its location, but the qualitative form (one stable fixed point) will not change. Also note that the second derivative is positive, which tells us that we are dealing with a minimum and not a maximum (the so-called second derivative test).

Many dynamical systems behave according to this potential function.3 Nothing spectacular happens: no bifurcations and no jumps. This is different when we consider potential functions with higher order terms.

3.3.2 The fold catastrophe

The fold catastrophe is defined by the potential function

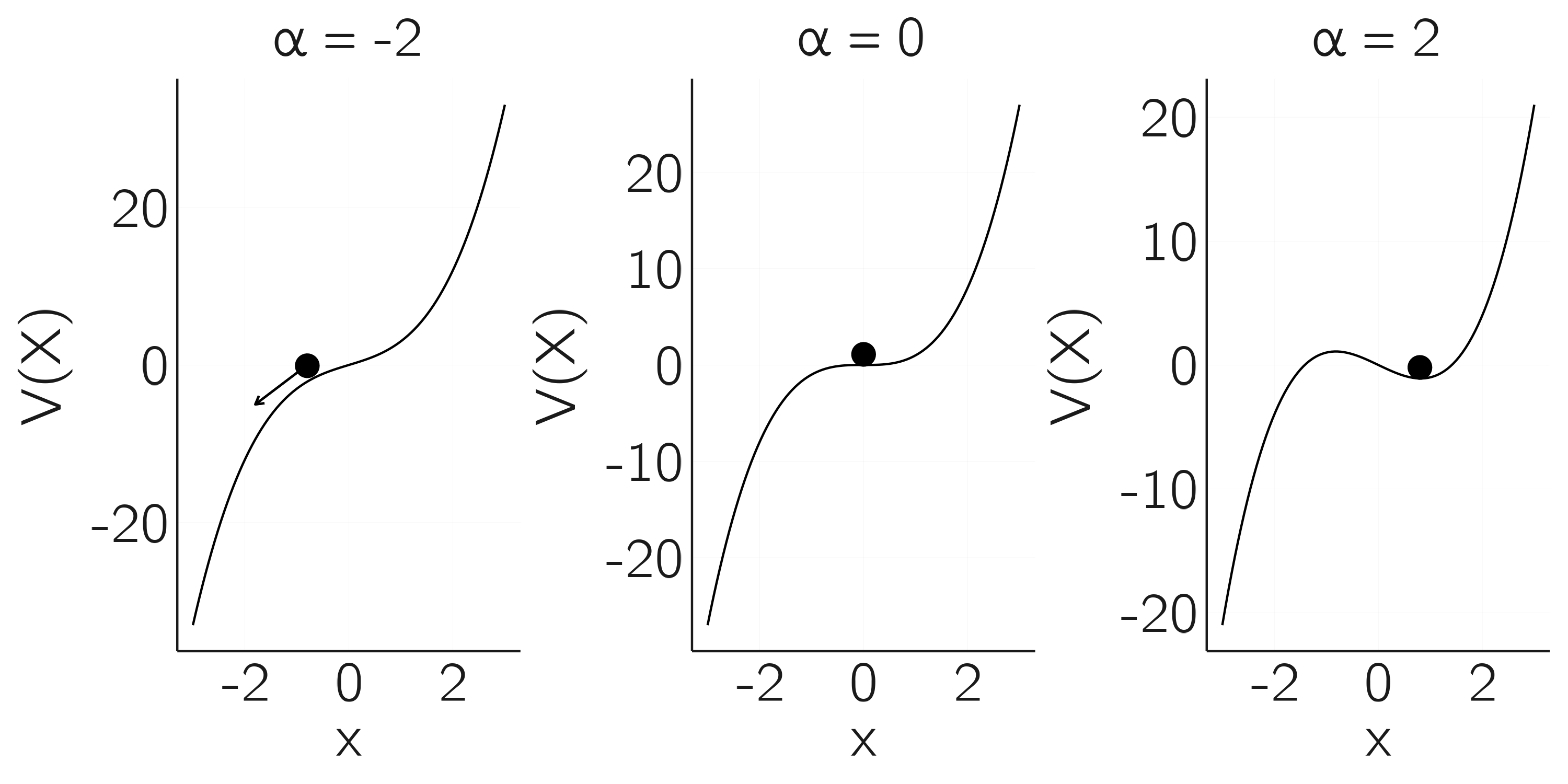

\[ V(X) = {- aX + X}^{3}. \tag{3.3}\]

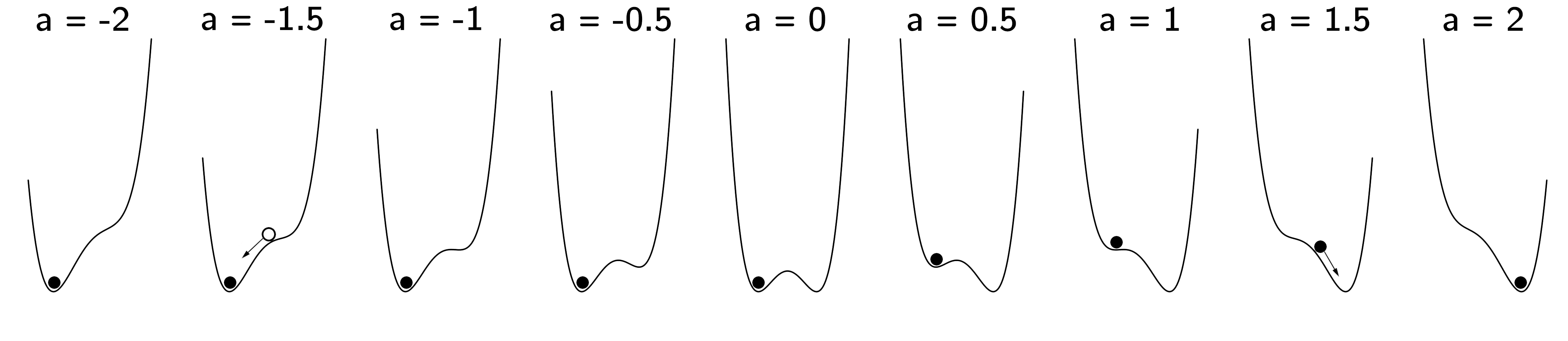

This function has a degenerate critical (bifurcation) point at \(X = 0, a = 0\), because at this point \(V^{'}(X) = - a + 3X^{2} = 0\) and \(V^{''}(X) = 6X = 0\), so both the first and second derivative are 0. What makes this point so special? This is illustrated in figure 3.4.

In the left plot, \(a < 0\) and there is no fixed point; the ball rolls away to minus infinity. This can be checked by setting the first derivative to zero, which gives \(X = \pm \sqrt{{a}/{3}}\). For negative \(a\) there is no solution. A positive value of \(a\) gives two solutions, as shown on the right for \(a = 2\). The positive solution \(X = \sqrt{2/3}\) is a stable fixed point because the second derivative in this point is positive. The negative solution \(X = - \sqrt{2/3}\) is an unstable fixed point because the second derivative in this point is negative.

The middle figure depicts the case just in between these two cases. Here the equilibrium is an inflection point, a degenerate critical point. The bifurcation occurs at this point as we go from a landscape with no fixed points to one with two, one stable and one unstable.

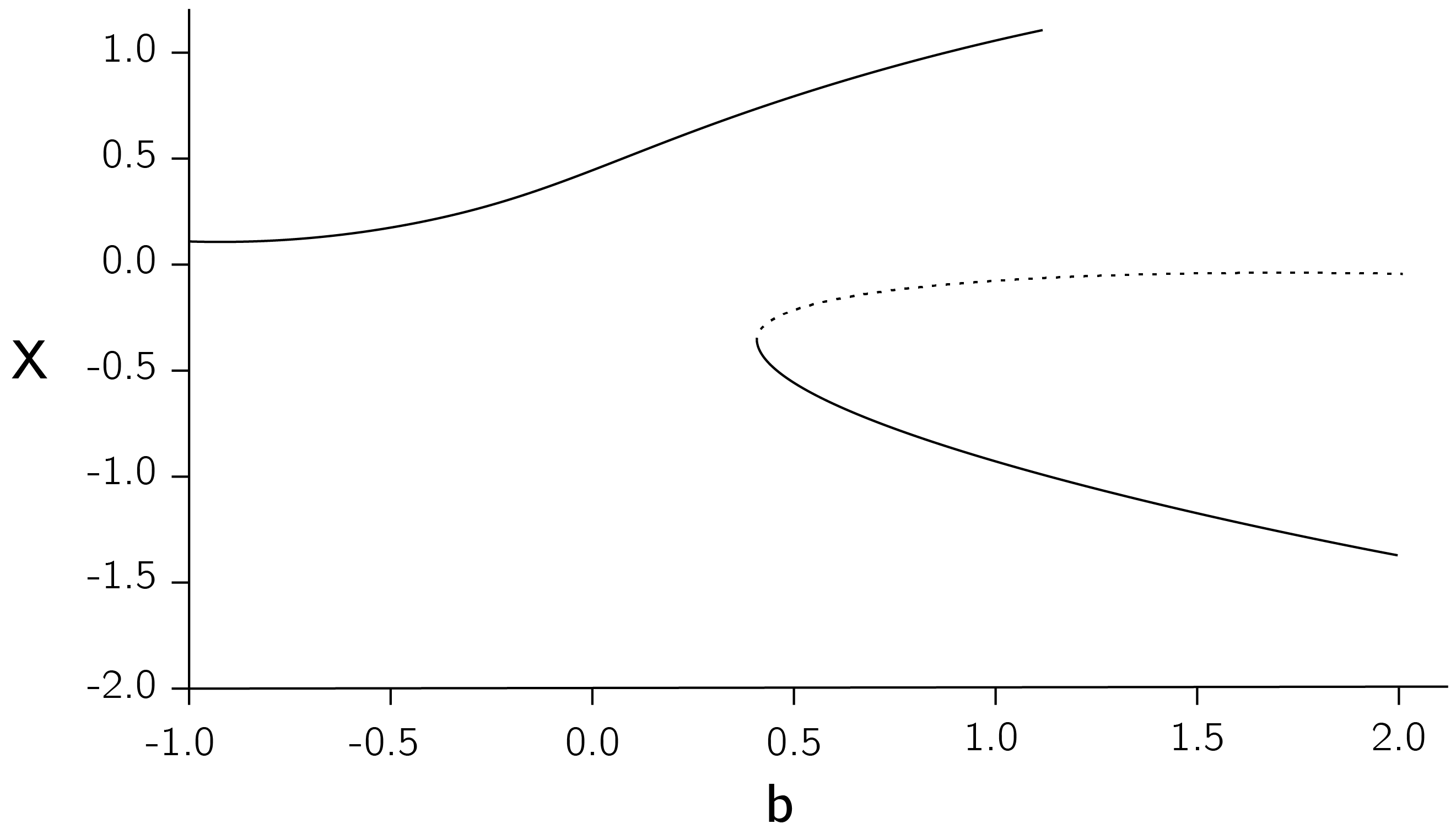

Another way to visualize this is by making a bifurcation diagram as we did for the logistic map in Chapter 2. On the x-axis we put \(a\), from \(0\) to \(2\). On the y-axis we plot \(X^{*}\), the fixed points of equation 3.3. We use lines for stable fixed points and dashed lines for unstable points. The diagram is shown in figure 3.5.

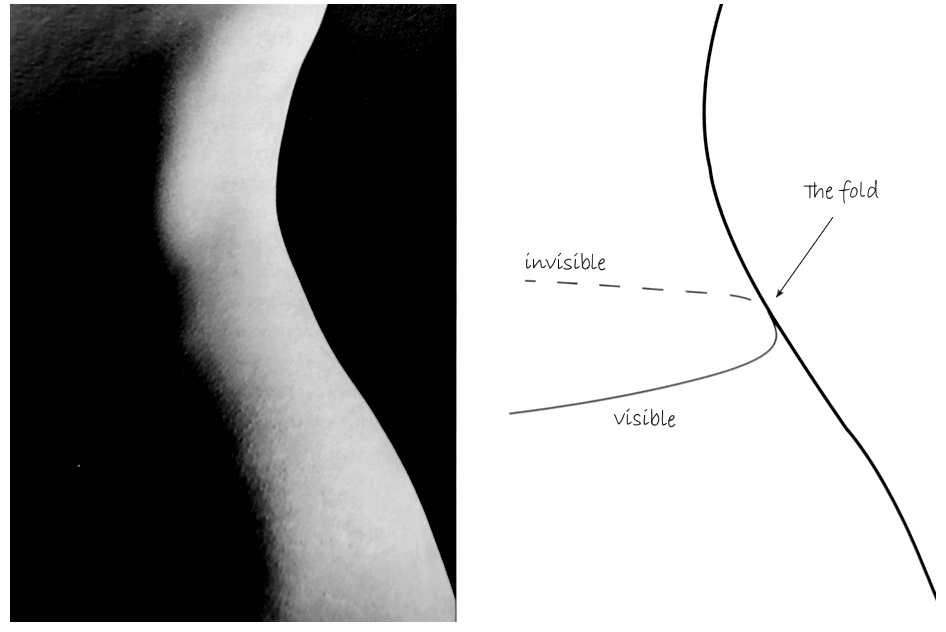

This bifurcation diagram may not look as spectacular as the logistic map, but its importance cannot be overstated. The fold is everywhere! In a fascinating book, The Seduction of Curves, Allen McRobie (2017) shows that whenever we see an edge, we see a fold. Figure 3.6 is from the book, where he demonstrates how different catastrophes appear in art. I also recommend his YouTube lecture.4

The fold catastrophe has been studied in fields from evolution theory (Dodson and Hallam 1977) to buoyancy in diving (Güémez, Fiolhais, and Fiolhais 2002). In addition, higher-order catastrophes are composed of folds.

3.3.3 The cusp catastrophe

The cusp, the best-known catastrophe, is the simplest catastrophe showing sudden jumps in behavior. The potential function of the cusp is

\[ V(X) = - aX - \frac{1}{2}bX^{2} + \frac{1}{4}X^{4}. \tag{3.4}\]

The half and quarter are added to make later derivations a little easier. The highest power is now 4. The first two terms contain the control variables \(a\) and \(b\), known as the normal and splitting variables. You might ask why there is no third order term. The nontechnical answer is that such a term would not change the qualitative behavior of the bifurcation. Catastrophe theory studies bifurcations that are structurally stable, meaning that perturbing the equations (and not just the parameters) does not fundamentally change the behavior (see Section 3.3.6 and Stewart (1982) for further explication).

I advise you to do some minimal research on this equation yourself, using an online graphic calculator tool like Desmos or GeoGebra (paste f(X)=-a X-(1/2) b X^2+(1/4) X^4). For example, set \(a = 1\) and \(b = 3\) and look at the graph of the potential function. Think in terms of the ball moving to a stable fixed point. What you should see is that there are three fixed points, of which the middle one is unstable. This bistability is important. Again, there is a relationship to unpredictability. Although you know the potential function and the values of \(a\) and \(b\), you are still not sure where the ball is. It could be in either of the minima.

Other typical behavior occurs when we slowly vary \(a\ (\)up and down from -2 to 2), for a positive \(b\) value (\(b = 2).\) This is shown in figure 3.7. At about \(a = 1.5\) we see the sudden jump. The left fixed point loses its stability and the ball rolls to the other minimum.

Now consider what will happen if we decrease \(a\) from 2 to -2. In this case, the ball will stay in the right minimum until \(a = - 1.5\). Where the jump takes place depends on the direction of the change in \(a\), the normal variable. The delay in jumps is called hysteresis. Hysteresis is of great importance in understanding change or lack of change in complex systems. The state in which the ball is the less deep minimum (for \(a = 0.5\) in figure 3.7) is often called a metastable or locally stable state.

In his classic paper on the psychophysical law, Stevens (1957) reports hysteresis in perceptual judgments when properties such as brightness and loudness are systematically varied from low to high and vice versa. In this paper he says: “I’m trying to describe it, not explain it. I am not sure I know how to explain it.” To me the cusp at least partially explains why hysteresis occurs.

Gilmore (1993) made an important point about noise in the system. If there is a lot of noise, the jumps occur earlier and we see less or no hysteresis effect. This is called the Maxwell convention as opposed to the “delay” convention. Demonstrating hysteresis therefore requires precise experimental control.

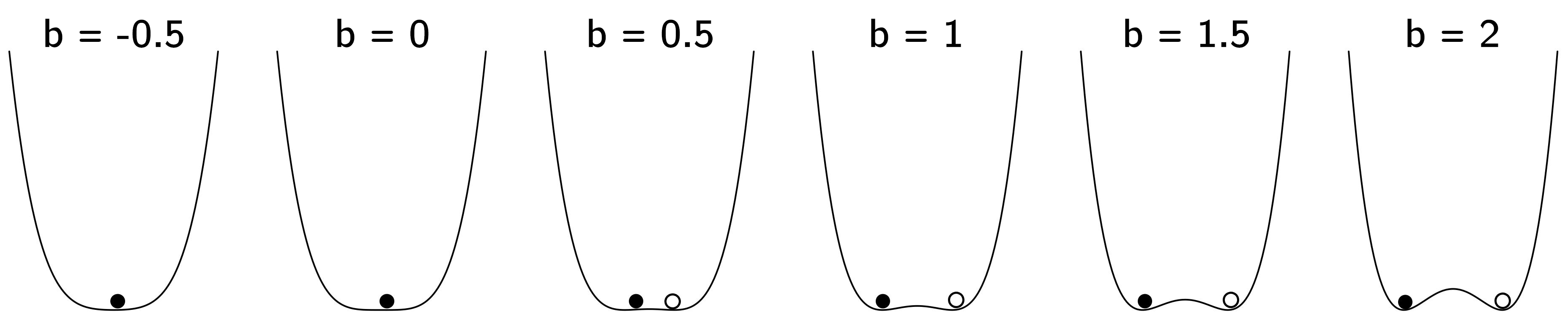

Another very interesting pattern occurs when \(a = 0\) and \(b\) is increased (figure 3.8).

For low \(b\) there is one stable fixed point that becomes unstable. It splits up into two new stable attractors. As we did for the fold, we can make bifurcation diagrams showing the equilibria of \(X\) as a function of \(a\) and \(b\). Along the \(a\)-axis we see hysteresis and along the \(b\)-axis we see divergence or what is often called a pitchfork bifurcation (figure 3.9).

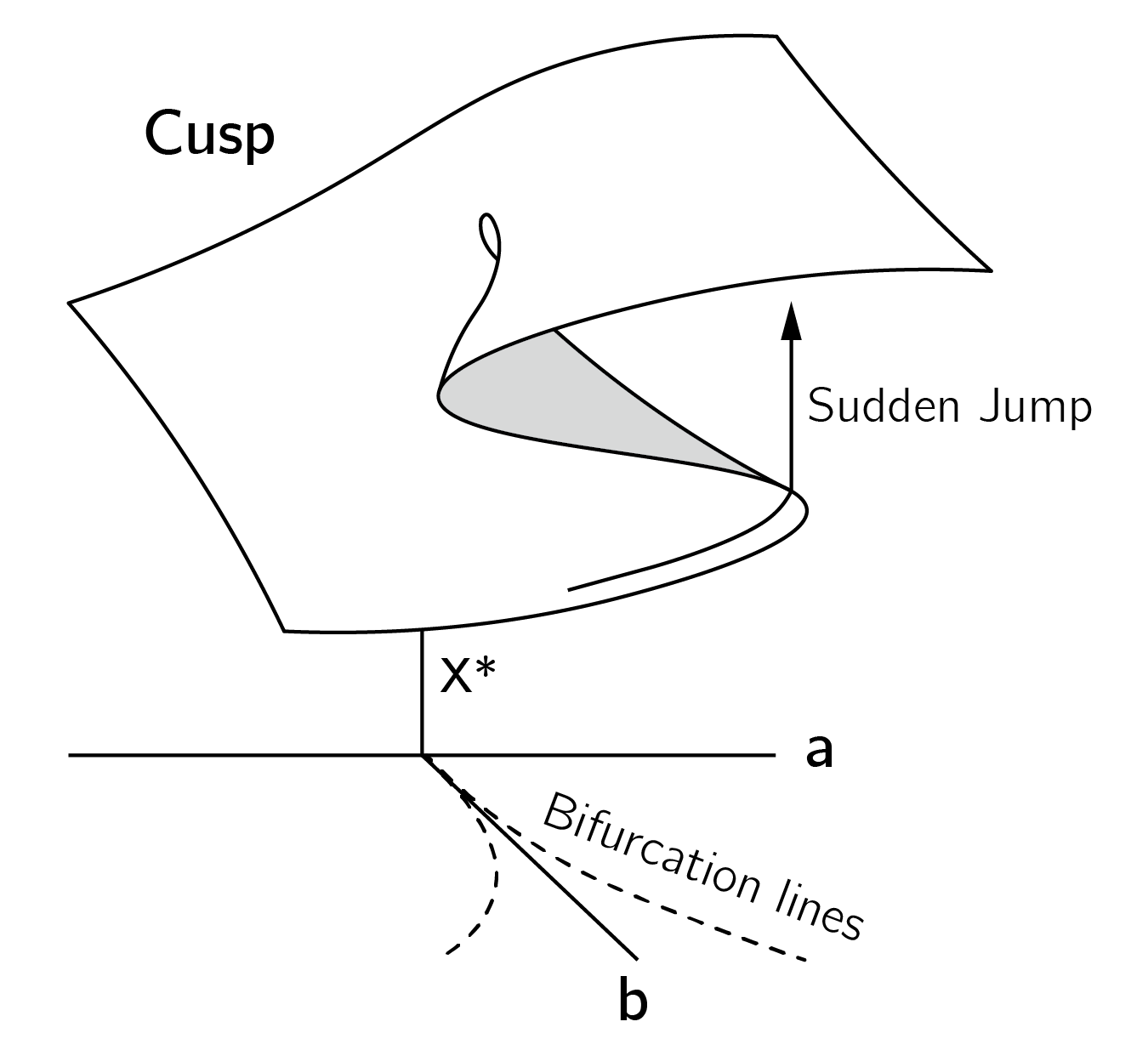

Depicting the combined effects of \(a\) and \(b\) requires a three-dimensional plot, which combines the hysteresis and pitchfork diagrams (figure 3.10).

The cusp diagram can be expressed mathematically by setting the first derivative to 0:

\[ V^{'}(X) = {- a - bX + X}^{3} = 0. \tag{3.5}\]

This type of equation is called a cubic equation.5 The degenerate critical points of the cusp can be found by setting the first and second derivative to 0. This is just within reach of your high school mathematics training, and I leave this as an exercise. The result is:

\[ 27a^{2} = 4b^{3}. \tag{3.6}\]

This equation defines the bifurcation lines where the first and second derivatives are both 0 and sudden jumps occur (see figure 3.10). The region between the bifurcation lines is the bifurcation set. In this region, the cusp has three fixed points, the middle of which is unstable. These unstable states in the middle are called the inaccessible area, shaded gray in the cusp diagram. The bifurcation lines meet at (0,0,0). At this point, the third derivative is also 0. This is the cusp point.

3.3.4 Examples of cusp models

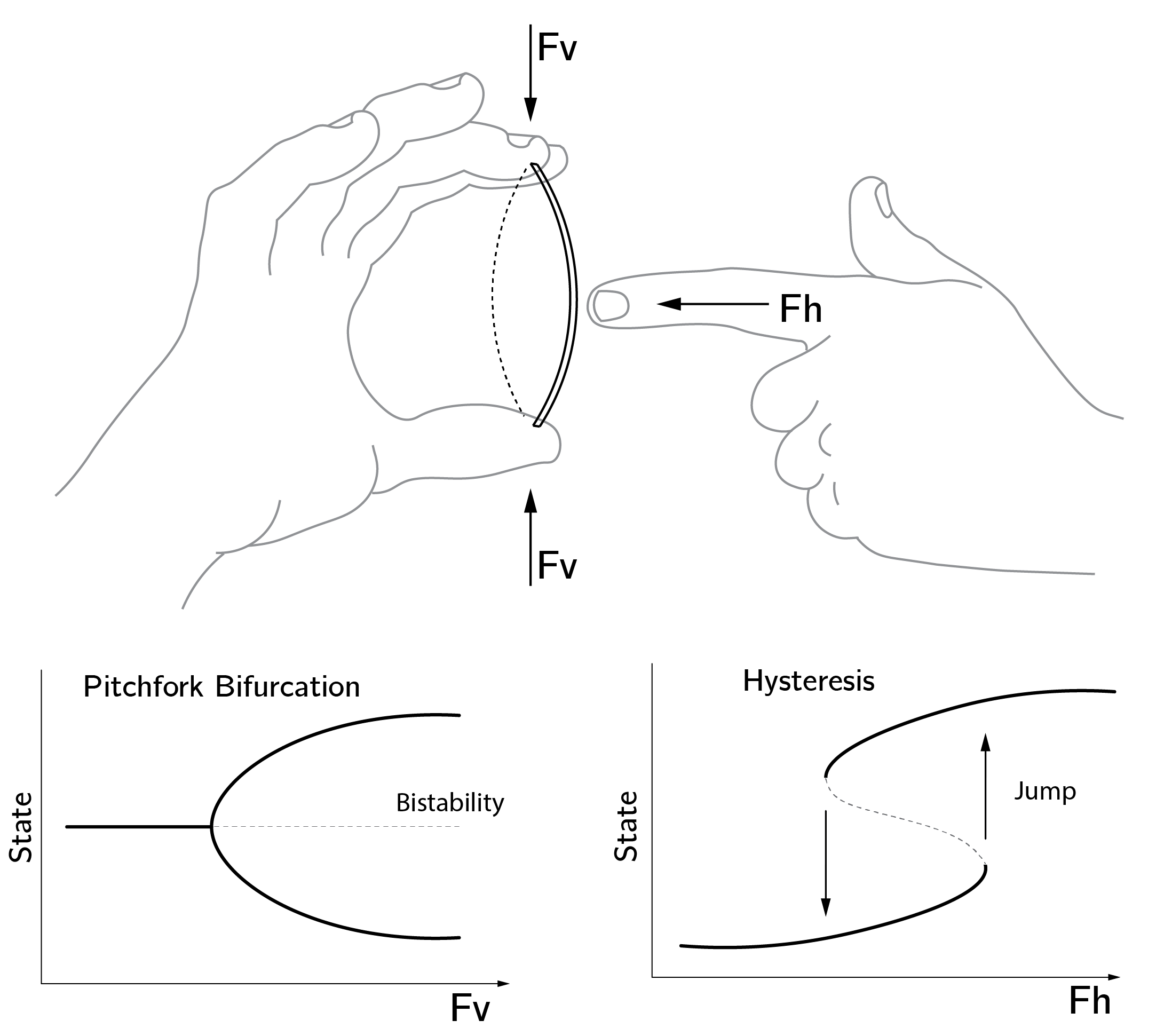

To illustrate the cusp, I always use the business card (figure 3.11). I recommend that you test this example (not with your credit card). You can play with two forces. \(Fv\) is the vertical force and the splitting control variable (\(b\)) in the cusp. \(Fh\) is the horizontal force and the normal variable (\(a\)) in the cusp. Note that you will only get smooth changes when \(Fv = 0\), but sudden jumps and hysteresis when you employ vertical force. One very important phenomenon is that the card has no “memory” when \(Fv = 0\). You can push the card to a position, but as soon as you release this force (\(Fh\) back to 0), the card moves back to the center position. This is not the case with vertical pressure. If we force the card to the left or right position, it will stay there, even if we remove the horizontal force. The card has a memory.

This seems simple, but the mathematical analysis of such elastic bending structures is a huge topic in itself (Poston and Stewart 2014). The freezing of water is also a cusp. As an approximation, we could say that the density of water is the behavioral variable, temperature is the normal variable, and pressure acts as a splitting variable (see chapter 14 of Poston and Stewart (2014), for a more nuanced analysis). It is very instructive to study the full phase diagram of water (figure 3.12). It can be viewed as a map of the equilibria. This type of mapping would be extremely useful in psychology and the social sciences.

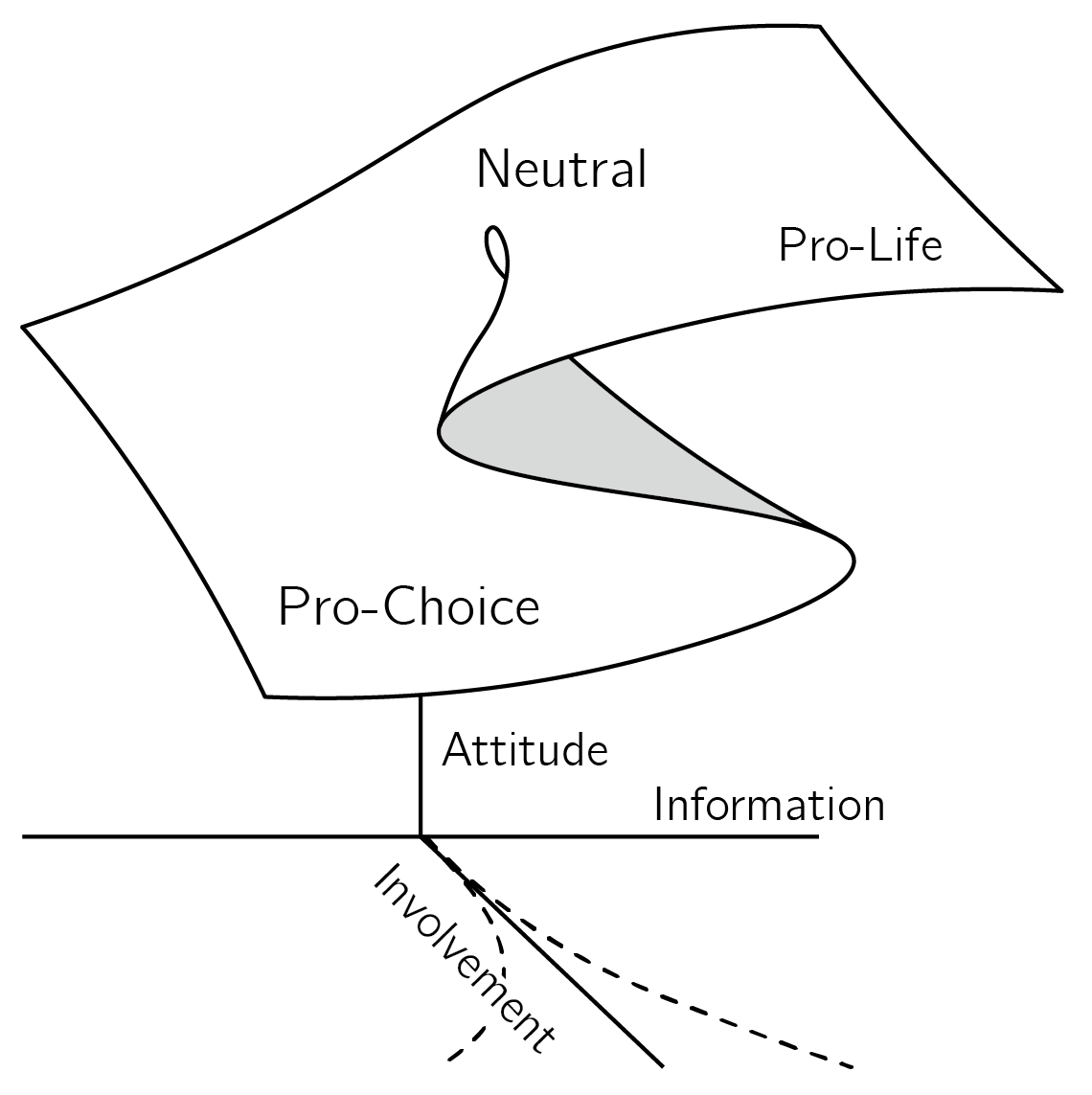

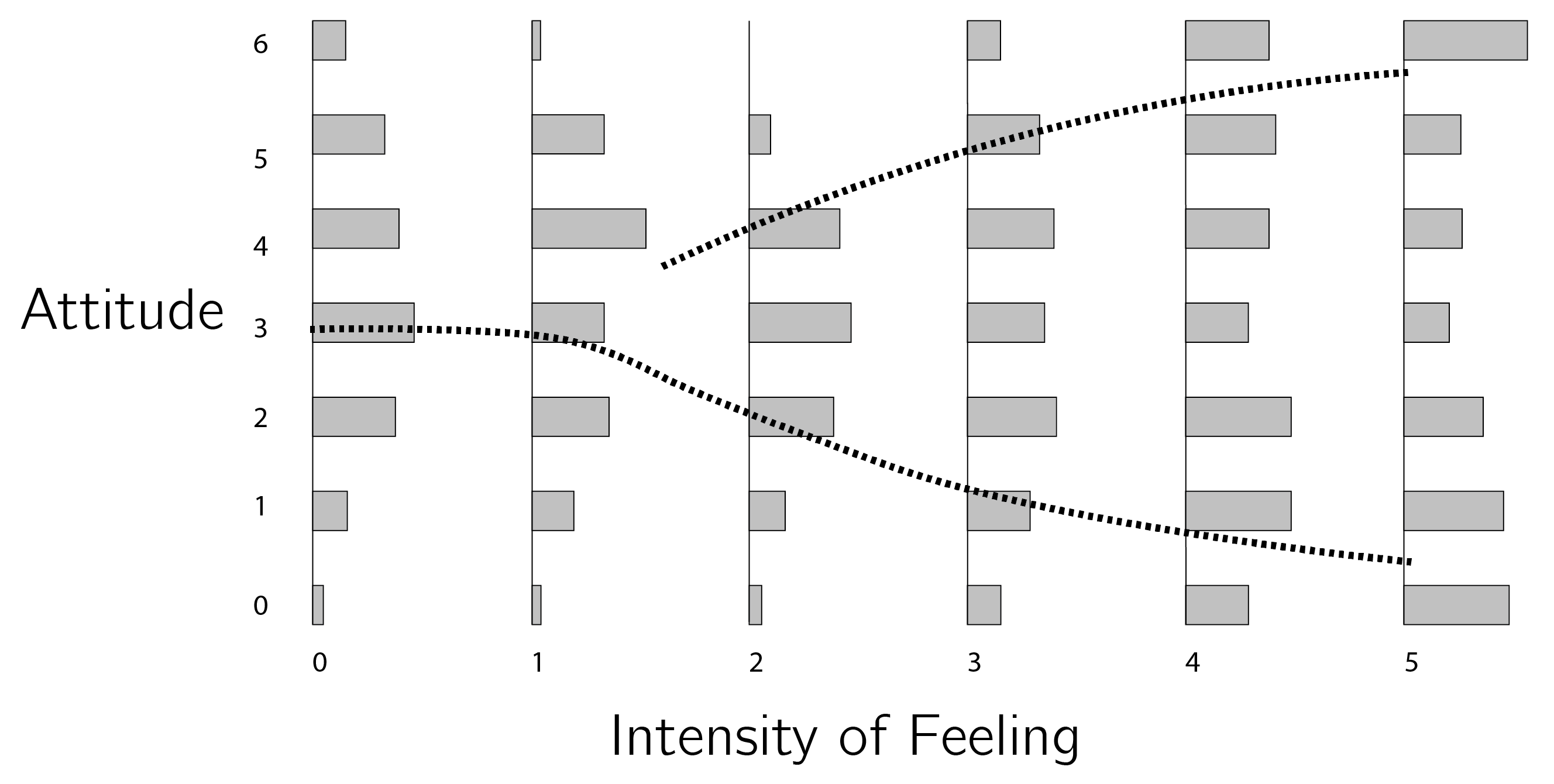

A psychological example of a cusp concerns sudden jumps in attitudes (Latané and Nowak 1994; van der Maas, Kolstein, and van der Pligt 2003). Attitudes will be discussed in much more detail in later chapters. In general, we have relatively stable attitudes toward many things in life (politics, snakes, hamburgers, and sports), but sometimes they change, and in rare cases they change radically. For example, you may suddenly become a conspiracy theorist, an atheist, or a vegetarian. One example is the attitude toward abortion (figure 3.13).

Cusp modeling begins by defining the states of the behavioral variable. In this case, the two states of the bistable cusp are the two opposing positions, pro-life6 and pro-choice. The other state associated with \(a = 0, b = 0\) is the neutral state, an “I don’t know” or “I don’t care” position.

The normal (\(a\)) and splitting (\(b\)) variable are interpreted as information and involvement. Information is a collection of factors that influence whether people tend to be in the pro-life or pro-choice position. Political and religious orientation as well as personal experiences add to this overall factor. One way to construct this information variable is through a factor analysis or principal component analysis.

The splitting factor, involvement, also combines a number of effects (importance, attention). The main idea is that there are two types of independent variables. Some will work (mainly) along the normal axis, and some will (mainly) impact the splitting axis.

The implications of this model are that, for low involvement, change is continuous (figure 3.13). Presenting people with some new information supporting the pro-life or pro-choice position will have a moderate effect. One problem, as demonstrated with the business card, is that the uninvolved person has “no memory.” As soon as you stop influencing this person, they drift to the neutral “I don’t care” position. We have another problem when people are highly involved: they experience hysteresis. When this hysteresis effect is large, persuasion just does not work. If you have been involved in political discussions, you have probably experienced that yourself.

But if the underlying change in information is large enough, attitudes can show a sudden jump. If they are central attitudes, they can be major life events. There is a lot of anecdotal evidence for such transitions (Ebaugh 1988), but it is very hard to capture such an effect in actual time series of attitude measures. Another effect that is consistent with the cusp model is ambivalence. Ambivalence is associated with high involvement. Highly involved people with balanced information (\(a = 0)\), may oscillate between extreme positions (see figure 4.2). Finally, the pitchfork bifurcation can explain issue or political polarization.

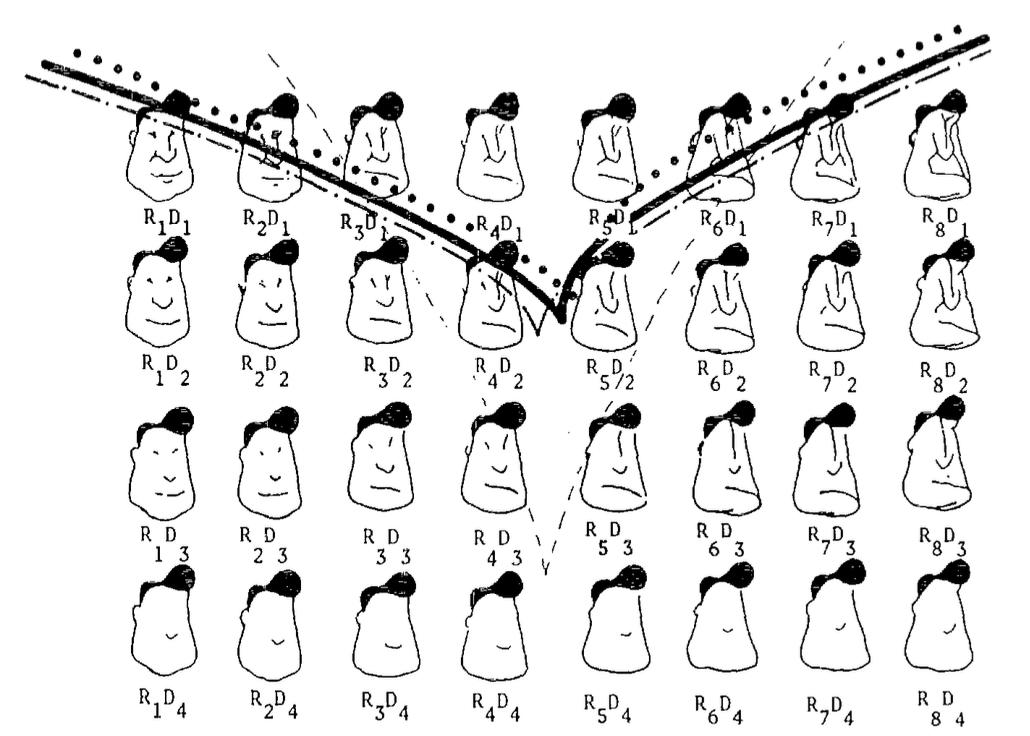

Another psychological example of the cusp-like behavior is multistable perception. Stewart and Peregoy (1983) proposed a model in which the perception of male face or female figure is used as a behavioral variable, the splitting variable is the amount of detail, and the normal variable is a change in detail related to the male/female distinction. The results are shown in figure 3.14.

3.3.5 Higher-order catastrophes

Note that the cusp is made up of folds. This is best seen in the hysteresis diagram in figure 3.9 (see the dotted rectangle). Higher order catastrophes yield elements of cusps and folds. The swallowtail catastrophe with potential function \(V(X) = {- aX - \frac{1}{2}bX^{2} - \frac{1}{3}cX}^{3} + \frac{1}{5}X^{5}\) consists of three surfaces of fold bifurcations meeting in two lines of cusp bifurcations, which in turn meet in a single swallowtail bifurcation point. We need a four-dimensional space to visualize this, which is difficult. The Wikipedia page on catastrophe theory has some rotating graphs that may help. The butterfly catastrophe has \(X^{6}\) as the highest term (and four control variables).

I will discuss this catastrophe in Section 6.3.3.5 in relation to modeling attitudes.7 Other catastrophes have two behavioral variables, not one. However, the vast majority of applications of catastrophe theory focus on the cusp, which will also be the focus of the remainder of this chapter. There are many good (but not easy) books that present the full scope of catastrophe theory (Gilmore 1993; Poston and Stewart 2014).

3.3.6 Other bifurcations

In contrast to bifurcation theory, catastrophe theory is limited to structurally stable, local bifurcations.

Examples of nonstructuraly stable local bifurcations are the transcritical bifurcation (\(\frac{dX}{dt} = aX - X^{2})\) and pitchfork bifurcation (\(\frac{dX}{dt} = bX - X^{3})\). The pitchfork is part of the cusp and is not structurally stable because it can be perturbed by an additional term \(a\), which, if unequal to 0, will distort the pitchfork (see figure 3.15).

Another one we have already seen is the period doubling bifurcation. This happened in the logistic map when the fixed point changed in a limit cycle of period 2. Finally, global bifurcations cannot be localized to a small neighborhood in the phase space, such as when a limit cycle diverges (Guckenheimer and Holmes 1983). However, I don’t know of any applications of global bifurcations in psychology or the social sciences.

3.4 Building catastrophe models

3.4.1 Mechanistic models

The model of the attitude toward abortion is called a phenomenological model, as opposed to a mechanistic model.

The mechanistic approach is much more common in the physical and life sciences. An example is the phase transition in water described by the van der Waals equation. Poston and Stewart (2014) show how the van der Waal equation can be reparametrized to take the form of the cusp equation. The advantage is that we learn how temperature and pressure are related to the control variables of the cusp. This gives us a full understanding of the dynamics of this phase transition.

One model that I will use as a psychological model in Chapter 4, Section 4.3.7, is the model of the spruce budworm outbreak, which occurs every 30 to 40 years and results in the defoliation of tens of millions of hectares of trees (Ludwig, Jones, and Holling 1978). The model is

\[ \frac{dN}{dt} = r_{b}N\left( 1 - \frac{N}{K} \right) - \frac{BN^{2}}{A^{2} + N^{2}}. \tag{3.7}\]

Where \(N\) is the size of budworm population, \(r_{b}\) is the growth rate, \(K\) is the carrying capacity, \(B\) is the upper limit of predation, and \(1/A\) is the responsiveness of the predator.

The first part is the logistic growth equation. \(N\) will grow to \(K\) at a rate \(r_{b}\). Note that this is a differential equation, not a difference equation. There is no chaos in logistic growth in continuous time. The second part is the predation function and has an increasing shape flattening out at \(B\). The curvature of this function is determined by \(A\). High \(A\) makes the function less steep, meaning that predation reacts rather slowly to the increase in budworms (more about the construction of this model later).

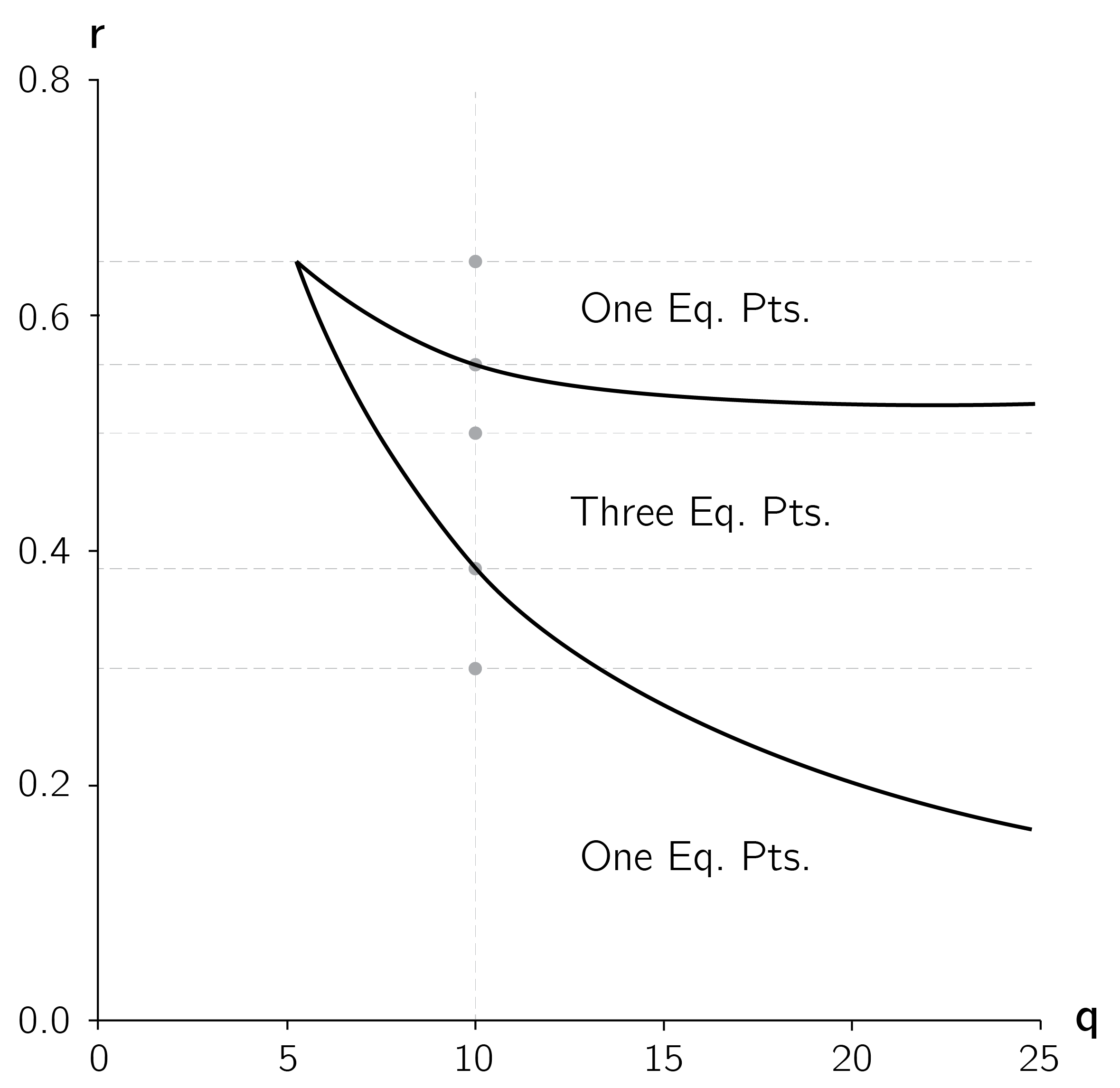

The analytical approach to this model is to reparametrize the model so that it takes the form of a cusp. Such reparameterizations are not so easy to do yourself. The idea is to create a smaller set of new variables that are functions of the model parameters. For this model a convenient reparameterization is

\[ r = \frac{A\ r_{b}}{B}\text{ and}\ q = \frac{K}{A}. \tag{3.8}\]

Using these two “constructed” control variables, we can depict the bifurcation lines of the cusp as in figure 3.16.

In later chapters we will discuss psychological examples of a mechanistic approach, but as far as models of transitions are concerned, these are rare. The phenomenological approach is much more common.

3.4.2 Phenomenological models

The cusp model of attitude is a typical phenomenological model. We simply assume that the cusp is a model of the attitude. Phenomenological models are less convincing than mechanistic models because they do not provide a deep understanding of the underlying mechanisms that drive the system. But in psychology and the social sciences, we cannot be too picky. Compared to many other, verbally stated attitude models, the cusp attitude model is quite precise. It implies a number of phenomena and is testable.

Setting up a phenomenological model is not a trivial task. I suggest some guidelines for this. First, define the behavioral variable. It is important to think about the bistable modes. What are they? What is the inaccessible state in between? Can you have jumps between these states? What is neutral state at the back of the cusp? If you cannot answer these questions, you should reconsider whether a cusp is an appropriate model.

Second, select the control variables. What could be a normal variable and what could be a splitting variable? These are not easy questions. Sometimes there are too many candidates. For the cusp model of attitudes, instead of involvement, we could suggest interest, importance, emotional value, etc. In this case, I think of the splitting axis as a common factor of all these slightly different variables. In other cases, we have no good candidates. In the example in figure 3.14, it is not clear exactly what is being manipulated along the normal axis. If you made a choice, it is good to check whether, at high values of the splitting values, variation of the normal variable may lead to sudden jumps and hysteresis. Also check whether the pitchfork bifurcation makes sense theoretically.8

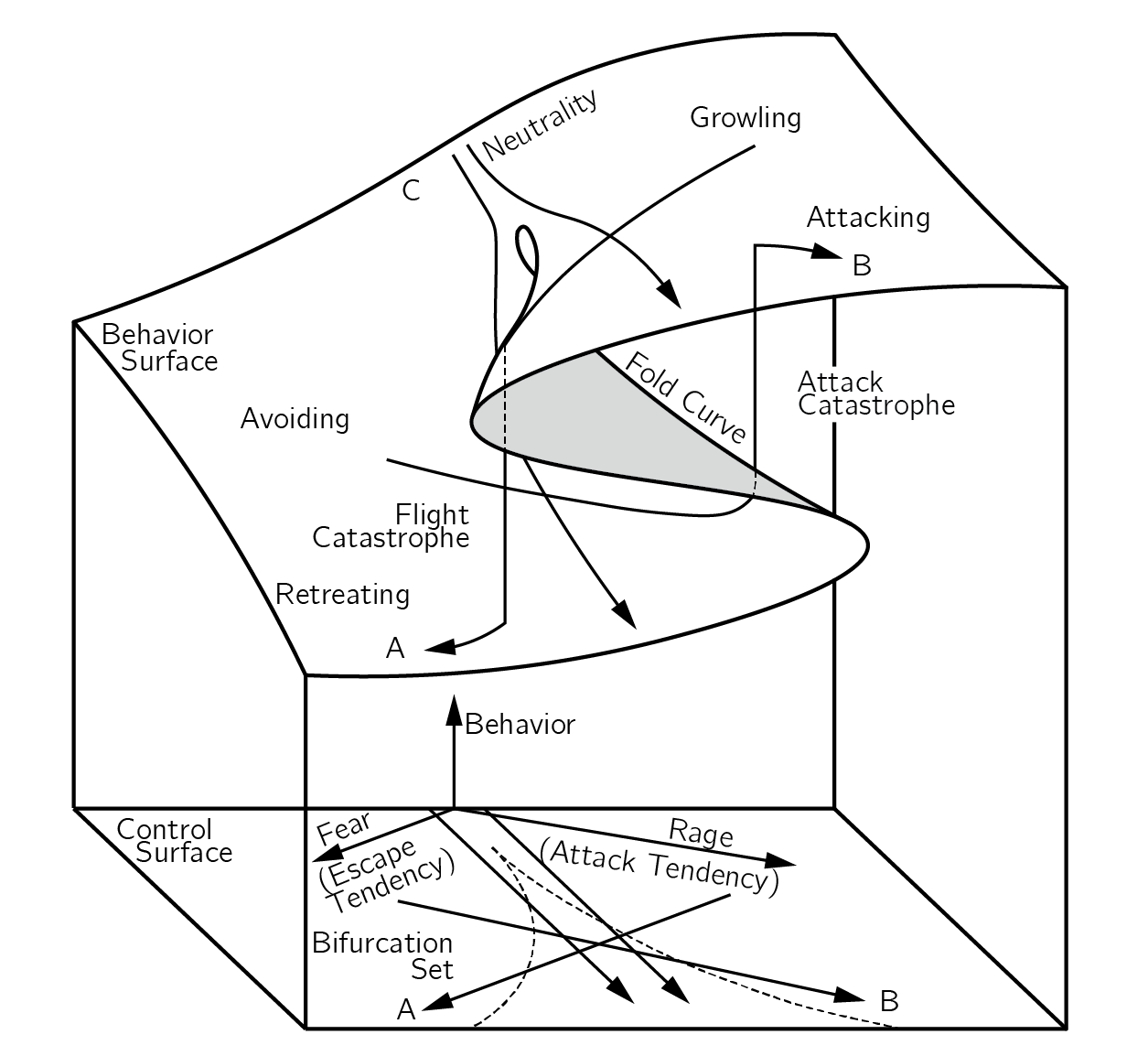

There is another issue here. In some phenomenological models, the control variables are rotated by 45 degrees. The most famous example is Zeeman’s (1976) model of dog aggression (figure 3.17).

The control variables are fear and rage. In such a rotation the normal variable is the difference between fear and rage, while the splitting variable is the sum of fear and rage. Another example can be found in our model of the speed-accuracy trade-off in reaction time tasks (Dutilh et al. 2011). When constructing a phenomenological model, these two options for defining the control variables should be considered.

To explain catastrophic drops in performance in work and sports, Hardy and Parfitt (1991) proposed a cusp model with cognitive anxiety as the splitting factor and physiological arousal as the normal factor. The idea is that at high levels of cognitive anxiety, increases and decreases in arousal lead to sudden changes, including a hysteresis effect. Hardy (1996) presents further tests of this model, which has been criticized by Cohen, Pargman, and Tenenbaum (2003). Extensions to the butterfly model are presented in Guastello (1984) and Hardy, Woodman, and Carrington (2004).

Cusp models have also been developed for addiction (Guastello 1984; Mazanov and Byrne 2006). Witkiewitz et al. (2007) propose using distal risk as the splitting axis and proximal risk as the normal axis. The model is tested using the renowned dataset from Project MATCH, an eight-year, multisite investigation of the effectiveness of various treatments for alcoholism.

As a final example, I mention the model for humor presented by Paulos (2008) in his fascinating book on mathematics and humor. Paulos explains his model in the context of puns. His example is: “Do you consider clubs appropriate for young children?” with the punchline “Only when kindness fails,” which is probably only funny to people with children. Paulos uses the rotated control axis as in the dog aggression model. Interpretation of the pun is the behavioral axis. One axis represents the first meaning of “clubs,” the other axis represents the second meaning. The bifurcation set represents the ambiguous region. A joke involves a jump from one meaning to another. Paulos claims that this cusp model combines cognitive incongruity theory, various psychological theories of humor, and the release theory of laughter. Tschacher and Haken (2023) propose a related complexity account of humor.

3.5 Testing catastrophe models

3.5.1 The catastrophe flags

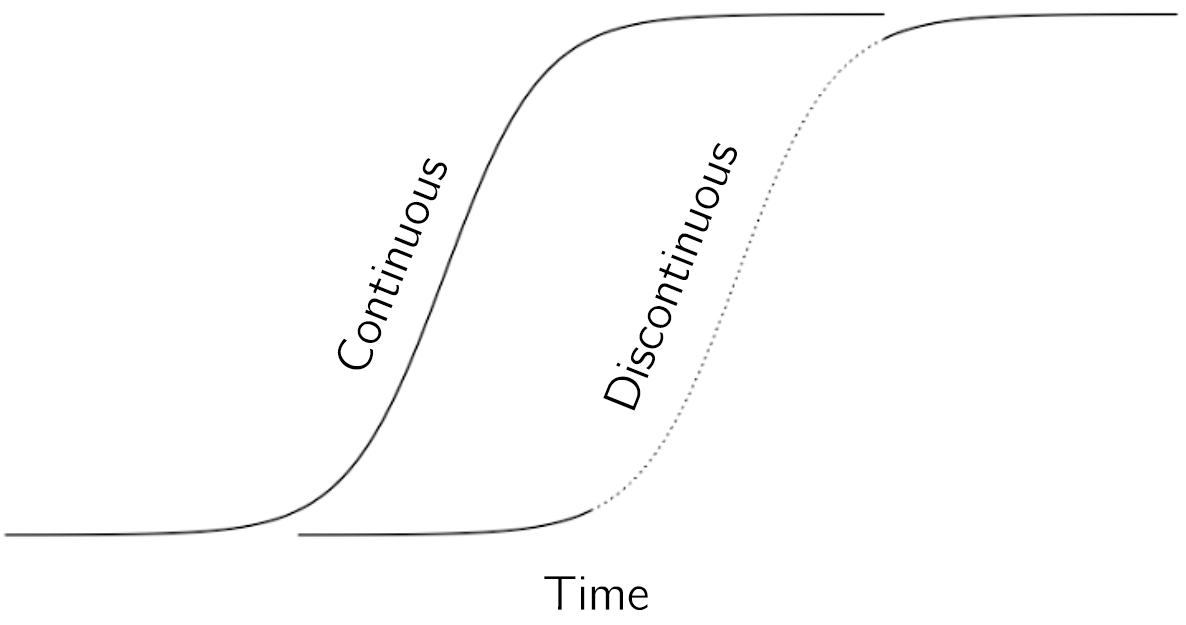

How sudden is sudden? How can climate changes be seen as transitions between stages (i.e., ice ages) when these transitions take hundreds of years? Even when the ball is rolling toward its new minimum, it takes time to roll. But then what is the difference with an continuous acceleration, such as we see in a logistic growth pattern? The time course of an acceleration and a sudden, discontinuous jump may look very similar (figure 3.18).

In fact, in terms of time-series data, they may look exactly the same. The main difference is that in the continuous case the intermediate values are stable. An acceleration can be understood as a quadratic minimum that changes its position quickly. If we stop the process by freezing the manipulated control variable in the process, the state will remain at an intermediate value. These intermediate values are all stable values. If we freeze the manipulated variable in a discontinuous process, it will continue to move to a stable state. In this case, the intermediate state is unstable. The ball keeps rolling and unfortunately the climate keeps changing.

In practice using time series data, this is a difficult distinction to make. It means that simple time series are not sufficient to distinguish accelerations from phase transitions. So how do we distinguish between the two processes? In the context of catastrophe theory, Gilmore (1993) proposed the catastrophe flags. These are cusp-related phenomena that can be seen in the data. While no single one of these is sufficient to indicate the cusp, when considered together they provide compelling evidence for its existence.

In the following subsections, I will define the flags and illustrate their applications in psychology using examples. The first flag is the sudden jump.

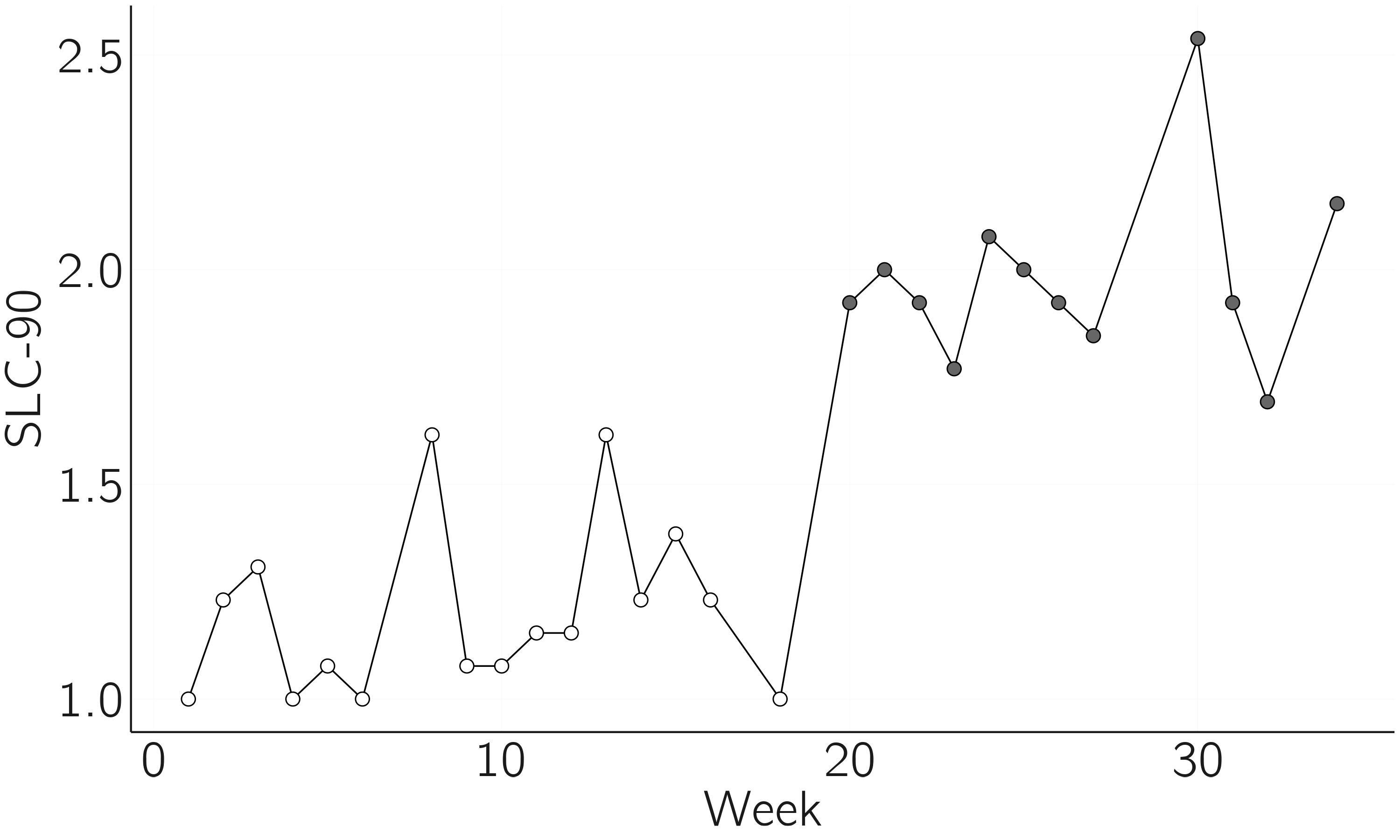

3.5.1.1 Sudden jump

Although the sudden jump is not sufficient (it could be due to an acceleration), demonstrating a sudden jump in time series is useful (also in relation to other flags). Statistical detection of sudden jumps is possible using a number of techniques. Figure 3.19 presents raw weekly measurements of depressive symptoms using the SCL-90-R depression subscale of a patient who gradually stopped antidepressant medication during the study. The participant and researchers were blind to the dose reduction scheme (Wichers, Groot, and Psychosystems 2016). One question was whether this reduction led to a sudden jump to the depressed state. Using a change point detection method (James and Matteson 2014), we found a jump at 18 weeks with a bootstrapped \(p\)-value of .005 (with the null hypothesis of no change point).

Many methods for change point analysis have been developed and compared in Burg and Williams (2022).

The code for this figure is:

layout(t(1)); par(mar = c(4,4,1,1))

x <- read.table('data/PNAS_patient_data.txt', header = TRUE)

library(ecp) # if error: install.packages('ecp')

e1 <- e.divisive(matrix(x$dep, , 1), sig = .01, min.size = 10)

plot(x$week, x$dep, type = 'b', pch = (e1$cluster-1) * 16 + 1, xlab = 'Week',

ylab = 'SLC-90', bty = 'n', main = 'Jump to depression')3.5.1.2 Multimodality

Multimodality (in the case of the cusp bimodality) is an important and easy-to-use flag, as it can be tested with cross-sectional data. Finite mixture models have been developed to test for multimodality in frequency distributions (McLachlan, Lee, and Rathnayake 2019).

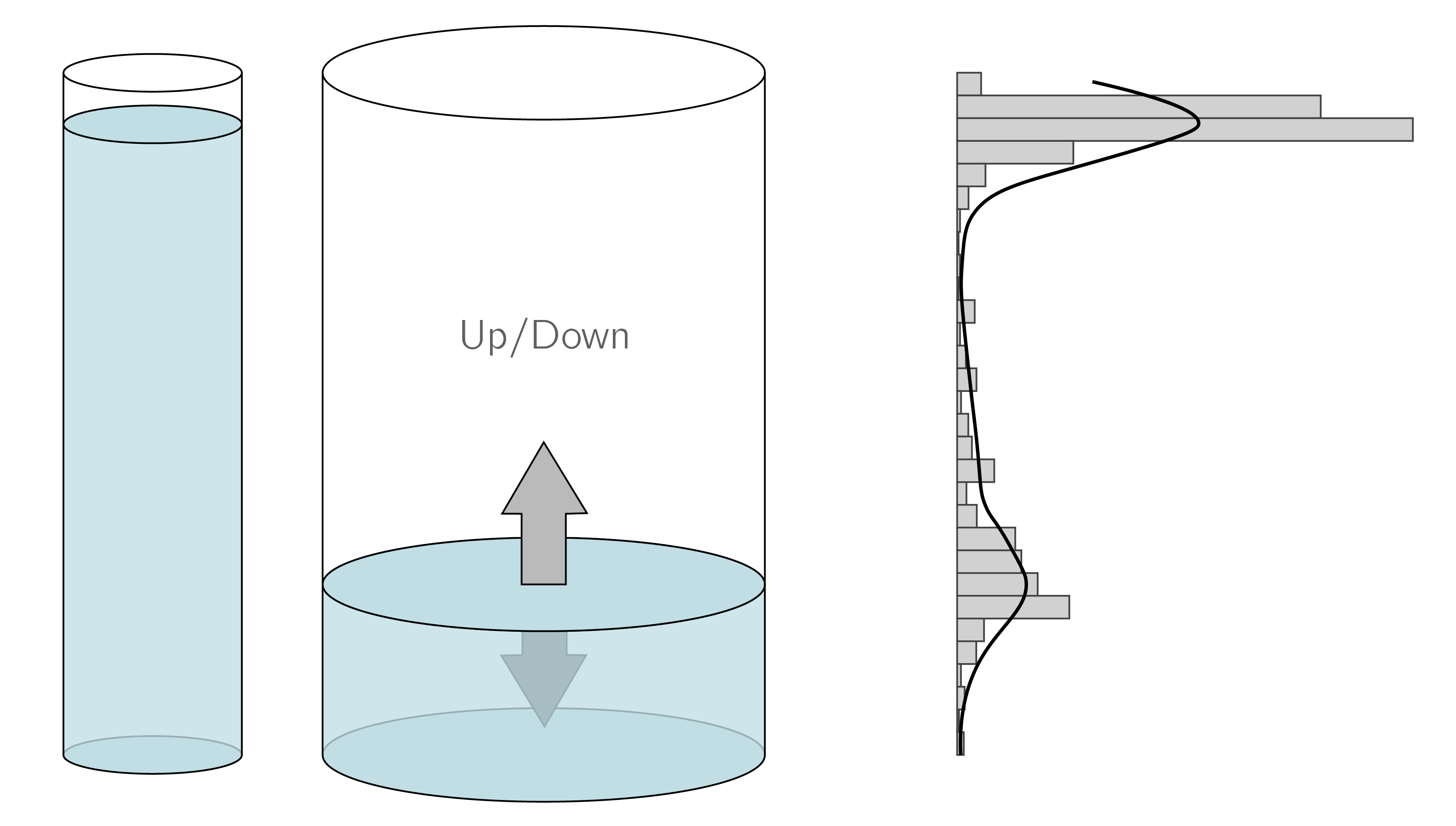

An example is shown in figure 3.20. These data come from a conservation anticipation task, where children have to predict the level of water in the second glass when it is poured over. The resulting data and the fit of a mixture of two normal distributions are shown on the right. The data are clearly bimodal supporting the hypothesis of a transition in conservation learning (van der Maas and Molenaar 1992). These data were used in Dolan and van der Maas (1998) to fit multivariate normal mixture distributions subject to a structural equation model.

The code is:

x <- unlist(read.table('data/conservation_anticipation_item3.txt'))

library(mixtools) # if error: install.packages('mixtools')

result <- normalmixEM(x)

plot(result, whichplot = 2, breaks = 30)There is a whole field in statistics focused on multimodality, mixtures, and clustering. There are some blogs that present overviews of the relevant R packages (Arnaud 2021). Several detailed examples from psychology, using hidden Markov models, are presented in Visser and Speekenbrink (2022).

To capture a sudden jump in a development process, you need a lot of high-frequency data. Sudden shifts in opinion are also rare. But it is easy to collect data on large numbers of people who are asked to make judgments about statements on an issue such as abortion. If these judgments are bimodally distributed, this is consistent with a phase transition. Bimodal data may also be produced by a process of acceleration, with time series consisting mainly of data values before and after the acceleration. So, bimodality is not sufficient. It can be considered necessary, so I always suggest starting with cross-sectional multimodal studies. If they fail, you might reconsider your hypothesis. I have often looked for multimodality in measures of arithmetic learning and never found anything convincing, which made me rethink my hypothesis.

3.5.1.3 Inaccessibility

Inaccessibility means that certain values of the behavioral variable are unstable. The business card is a good example. Given some vertical pressure, we can try what we want but we cannot force the card to stay in the middle position; it is unstable.

In Experiment 2 of Dutilh et al. (2011), we focused on this flag. Our hypothesis was that in simple choice response tasks there is a phase transition between a fast-guessing state and a slower stimulus-driven response state. The idea is that if we force subjects to speed up, there will be a catastrophic decline in performance (from almost 100% correct to 50% correct).

We created a game in which subjects responded to a series of simple choice items (a lexical decision task). The length of the series was not known to the subject. At the end of a series, they were rewarded according to how close their percentage correct was to 75%. Speed was also rewarded, but much less. So, we asked the subject to be in the inaccessible state. The alternative hypothesis, based on information accumulation models, was that there was no phase transition and that responding with 75% accuracy required the correct setting of a boundary (see Section 4.3.1).

It appeared that subjects solved the task by switching between the fast-guessing mode and the slower stimulus-controlled mode, even when instructed according to the alternative model. Thus, the 75% intermediate state appeared to be unstable.

3.5.1.4 Divergence

Divergence or the pitchfork bifurcation, the splitting up of an equilibrium, requires the manipulation of the splitting variable. In the case of attitudes, we hypothesize this to be involvement or some related variable. In van der Maas, Kolstein, and van der Pligt (2003), we reanalyzed a dataset from Stouffer et al. (1949), which Latané and Nowak (1994) presented as evidence for the cusp model. The attitude concerned demobilization (from 0, unfavorable, to 6, favorable), and respondents were asked to indicate how strongly they felt about their answer (from intensity 0 to intensity 5). For low intensities of feeling, the data are normally distributed whereas for higher intensities, data are bimodally distributed (see figure 3.21).

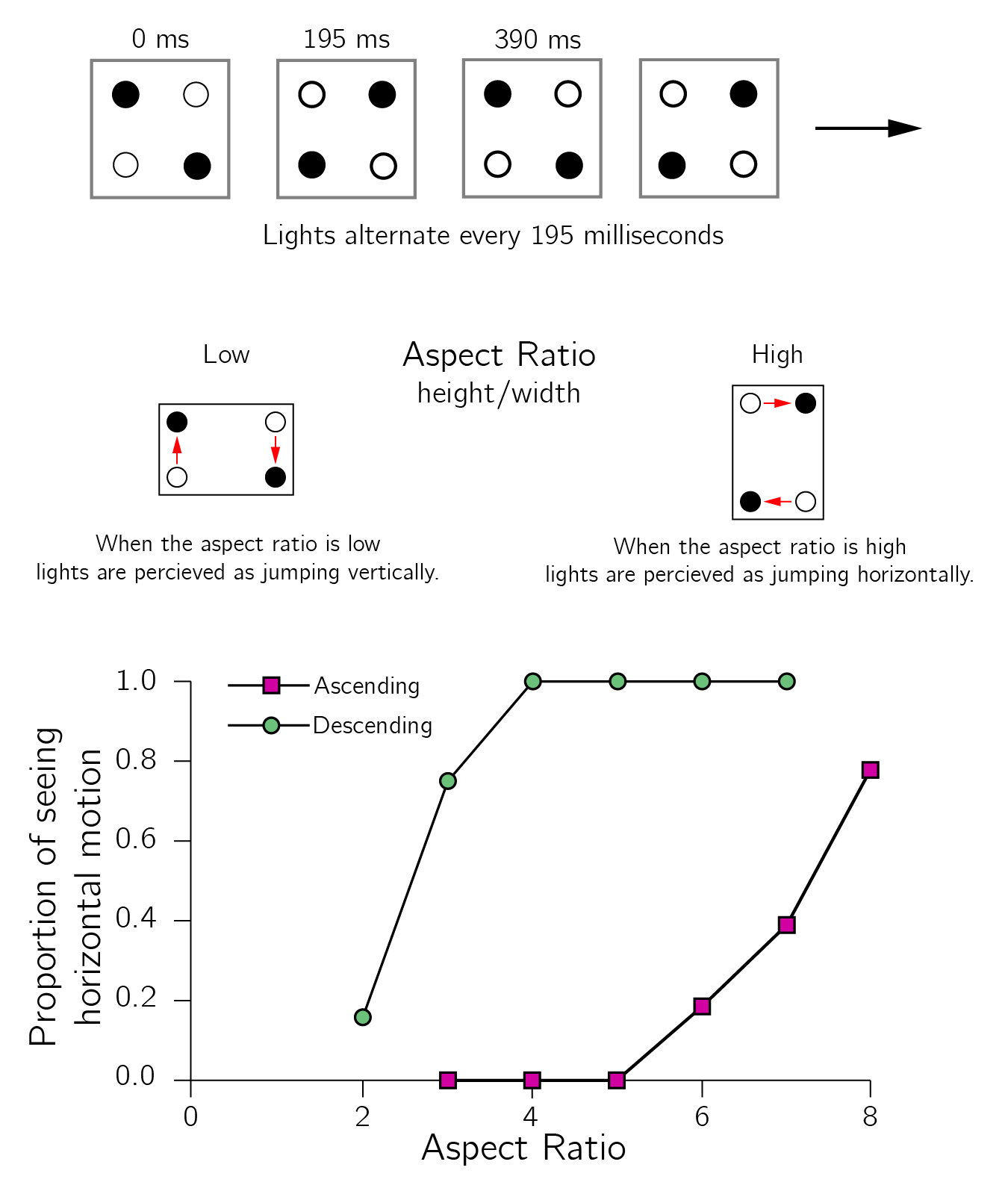

3.5.1.5 Hysteresis

To test for hysteresis, we need to slowly increase and decrease the normal variable and test whether sudden jumps occur with a delay. We have demonstrated hysteresis in proportional reasoning using Piaget’s balance scale test in which a specific dimension (distance from the fulcrum) was systematically varied (Jansen and van der Maas 2001). We also hypothesized that speeding up subjects in response time tasks would eventually lead to a catastrophe in accuracy. To support this claim, we demonstrated bimodality in response times and hysteresis in the speed-accuracy trade-off (Dutilh et al. 2011). To support the cusp model of multistable perception, we used the quartet motion paradigm (Ploeger, van der Maas, and Hartelman 2002). In this perceptual paradigm two lights are presented simultaneously, first a pair from two of the diagonally opposite corners of the rectangle, and then a second pair from the other two diagonally opposite corners of the rectangle. Usually, either vertical or horizontal apparent motion is perceived. By gradually increasing or decreasing the aspect ratio (i.e., the ratio of height to width of the quartet), hysteresis in the jumps between the two percepts was demonstrated (see figure 3.22).

In Ploeger, van der Maas, and Hartelman (2002), we used a special design, the method of modified limits, to rule out the alternative explanation that hysteresis is simply due to delayed responses. It could be that the switches always occur in the middle (at an aspect ratio of 1), but the self-report is delayed. In the modified limits method, subjects do not respond during a trial, only after the entire trial. By varying the length of the trials, it is possible to determine at which parameter value the subject perceives a switch.

3.5.1.6 Anomalous variance, divergence of linear response, and critical slowing down

Gilmore’s last three flags—anomalous variance, divergence of linear response, and critical slowing down—are indicators that occur near the bifurcation lines. They are also known as early warning signals and are a popular topic of research (Dakos et al. 2012).

Anomalous variance occurs because near a bifurcation point the second derivative diminishes, meaning that the minimum becomes less deep. Assuming there is always some perturbation of the state, this will lead to larger fluctuations in the state.

Divergence of linear response is the size of the effect of a small perturbation of the state, which will be greater near a bifurcation point. It will also take longer to return to equilibrium. This delay in return time is known as critical slowing down and is also studied in other approaches to nonlinear dynamical systems (e.g., synergetics, Haken 1977). Examples of applications in psychology can be found in Leemput et al. (2014) and Olthof et al. (2020). A somewhat critical review is provided in Dablander et al. (2023).

In my experience, the problem with early warning signals is that both type 1 and type 2 errors should be low for predicting transitions. This is challenging even in simulations, let alone in noisy psychological data. It would be fantastic if these early warnings really worked. For example, being able to predict a relapse into depression or addiction would be of great clinical value.

Together, the catastrophe flags provide a methodology for phase transition research in psychology. A single flag may not be sufficient, but the combination is. For example, the combination of evidence for inaccessibility and hysteresis is convincing. I have given psychological examples of most of the flags. Which flags to use in a particular case depends on the knowledge and experimental control of the control variables. Another approach is to fit the cusp model directly to the data. This is the subject of the next section.

3.5.2 Fitting the cusp to cross-sectional data

3.5.2.1 Cobb’s maximum likelihood approach

In a series of papers, Loren Cobb and colleagues (Cobb and Zacks 1985; Cobb 1978) developed a maximum likelihood approach9 to fit the cusp catastrophe to data consisting of cross-sectional measurements of \(X\), \(a\), and \(b\). We have implemented this approach in a cusp R package described in Grasman, van der Maas, and Wagenmakers (2009).

The basic idea is to make catastrophe theory, a deterministic theory, stochastic by adding a stochastic term, called Wiener noise (with variance \(\sigma^{2})\), to equation 3.210:

\[ dX = - V^{'}(X)dt + \sigma dW(t). \tag{3.9}\]

It is important to note that this type of stochasticity is not the same as measurement noise. Measurement noise—that is, \(\varepsilon\) in \(Y = X + \varepsilon\)—does not affect the dynamics of \(X\). Wiener noise does; it is part of the updating equation of \(X\) itself. This stochastic differential equation is associated with a probability distribution of the form:

\[ f(X) = \frac{1}{{Z\sigma}^{2}}e^{\frac{- V(X)}{\sigma^{2}}}, \tag{3.10}\]

where \(Z\) is a normalizing constant11 necessary to ensure that the area under \(f(X)\) is 1. This may look complicated, but for the quadratic case \(V(X) = {\frac{1}{2}X}^{2}\), this results in the standard normal distribution, with \(Z = {\sqrt{2\pi}}/{\sigma}\).

As in the case of the normal distribution, we want to allow for some transformations of the variables. To simplify the necessary statistical notation, we write the cusp as \(V(y) = {- \alpha y - \frac{1}{2}\beta y^{2} + \frac{1}{4}y}^{4}\). The probability distribution for the cusp is:

\[ f(y) = \frac{1}{{Z\sigma}^{2}}e^{\frac{\alpha y + \frac{1}{2}\beta y^{2} - \frac{1}{4}y^{4}}{\sigma^{2}}}. \tag{3.11}\]

As in regression models, the cusp variables are modeled as linear function of measured variables. That is, the dependent variables \(Y_{i1},\ Y_{i2},\ldots,Y_{ip}\) and the independent variables \(X_{i1},\ X_{i2},\ldots,X_{iq}\), for subjects \(i = 1,\ldots,n\), are related to the cusp variables as follows:

\[ \begin{gathered} y_{i} = w_{0} + w_{1}Y_{i1} + w_{2}Y_{i2} + \ldots + w_{p}Y_{ip}, \\ \alpha_{i} = a_{0} + a_{1}X_{i1} + a_{2}X_{i2} + \ldots + a_{q}X_{iq}, \\ \beta_{i} = b_{0} + b_{1}X_{i1} + b_{2}X_{i2} + \ldots + b_{q}X_{iq}. \end{gathered} \tag{3.12}\]

By estimating these regression parameters, we fit the cusp model to empirical data. The cusp package in R makes this possible. I will first demonstrate this using simulated data. I strongly recommend this approach. First, it forces you to understand what the statistical technique actually does, and second, it gives you a way to test the power and investigate violations of the technique’s assumptions.

library(cusp) # if error: install.packages('cusp')

set.seed(1)

X1 <- runif(1000) # independent variable 1

X2 <- runif(1000) # independent variable 2

# to be estimated parameters:

w0 <- 2; w1 <- 4; a0 <- -2; a1 <- 3; b0 <- -2; b1 <- 4

# sample Y1 according to cusp using rcusp and the parameter values:

Y1 <- -w0/w1 + (1/w1) * Vectorize(rcusp)(1, a0 + a1 * X1, b0 + b1 * X2)

data <- data.frame(X1, X2, Y1) # collect ‘measured’ variables in dataI recommend doing some descriptive analysis first. With hist(data$Y1) we can inspect whether there is some indication of bimodality. \(X2\) is the splitting variable, so perhaps we see stronger bimodality with hist(data$Y1[data$X2>mean(data$X2)]). The function pairs in R, pairs(data), is also always recommended. In this perfect simulated case, you will already see strong indications of the cusp. Now we fit the full model with \(\alpha\) and \(\beta\) both as function of \(X1\) and \(X2\).

fit <- cusp(y ~ Y1, alpha ~ X1+X2, beta ~ X1+X2, data)

summary(fit) The table provides a summary:

| Coefficients | Estimate | Std. Error | z-value | Pr(>|z|) |

|---|---|---|---|---|

| a[Intercept] | -2.13 | 0.19 | -11.0 | < 2e-16*** |

| a[X1] | 3.11 | 0.22 | 14.2 | < 2e-16*** |

| a[X2] | 0.15 | 0.17 | 0.9 | 0.39 |

| b[Intercept] | -2.29 | 0.34 | -6.7 | 2.66e-11*** |

| b[X1] | -0.09 | 0.33 | -0.3 | 0.79 |

| b[X2] | 4.40 | 0.27 | 16.5 | < 2e-16*** |

| w[Intercept] | 1.98 | 0.07 | 27.6 | < 2e-16*** |

| w[Y1] | 3.97 | 0.10 | 38.0 | < 2e-16*** |

Note that we fit a model with too many parameters. We also estimated \(a_{2}\) and \(b_{1}\) (because the model was specified as alpha ~ X1+X2, beta ~ X1+X2). These estimates are not significantly different from 0. The other parameters are estimated reasonably close to their true values, since the true values fall within the confidence interval of the estimates (defined by twice the standard error on either side). We expect a better fit in terms of AIC and BIC when we fit a reduced model without \(a_{2}\) and \(b_{1}\). These fit indices penalize the goodness of fit (e.g., the log-likelihood) for the number of parameters used to discourage overfitting and to promote model parsimony.

fit_correct_model <- cusp(y ~ Y1, alpha ~ X1, beta ~ X2, data)

summary(fit_correct_model)| R.Squared | logLik | npar | AIC | AICc | BIC | |

|---|---|---|---|---|---|---|

| Full model | 0.428 | -1058.7 | 8 | 2133.3 | 2133.5 | 2172.6 |

| Reduced model | 0.426 | -1059.0 | 6 | 2130.0 | 2130.1 | 2159.5 |

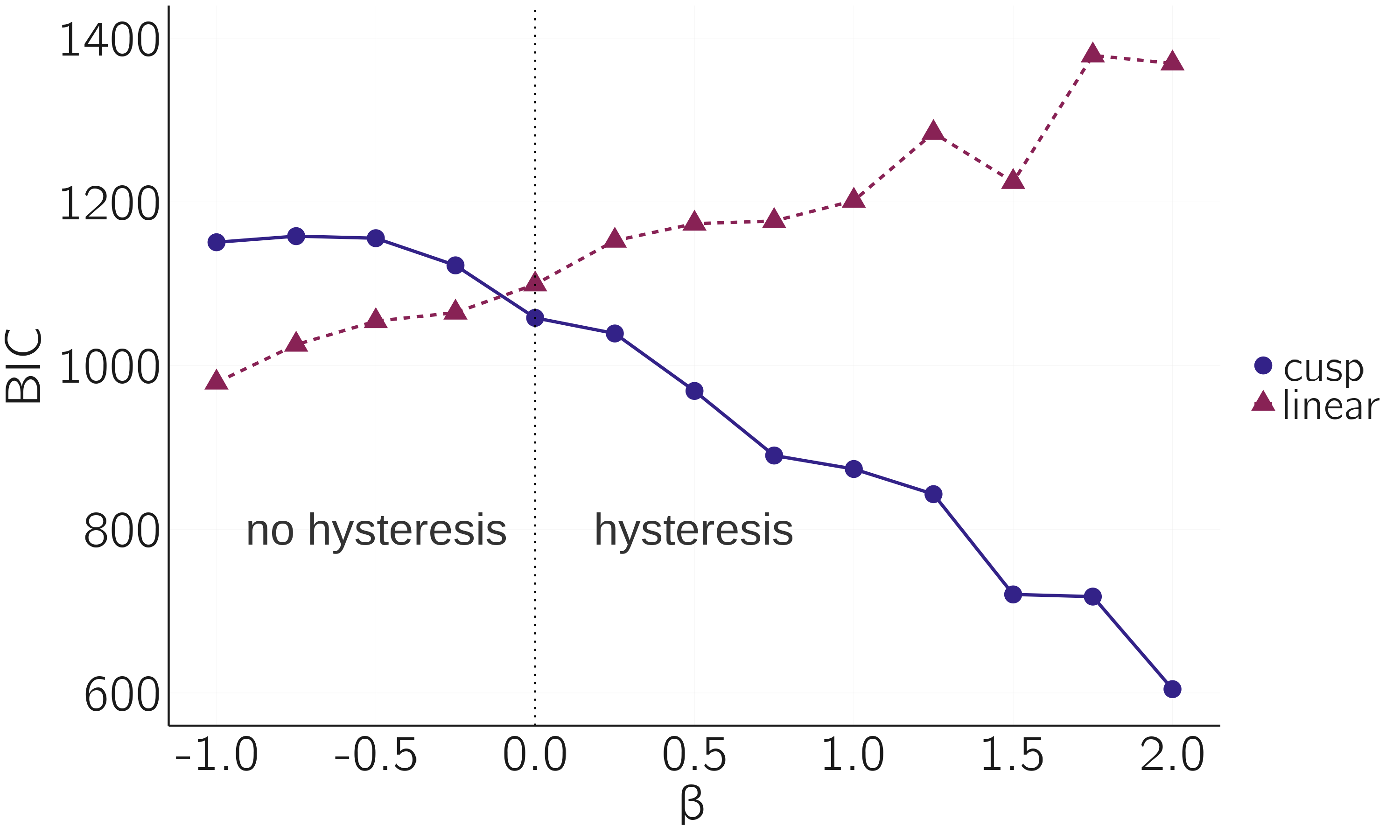

The next simulation demonstrates that we can detect hysteresis using this approach. We simulate data with \(- 2 < \alpha < 2,\) and fixed \(\beta\). If \(\beta < 0\) we have no hysteresis, but if \(\beta > 0\), we do have hysteresis. With the code below we simulate datasets for different \(\beta\) and compare the goodness of fit between the linear and cusp model. Figure 3.23 summarizes the results. Note that a lower BIC indicated the better-fitting model.

set.seed(10)

n <- 500

X1 <- seq(-1, 1, le = n) # independent variable 1

a0 <- 0; a1 <- 2; b0 <- 2 # to be estimated parameters

b0s <- seq(-1, 2, by = .25)

i <- 0

dat <- matrix(0, length(b0s), 7)

for (b0 in b0s){

i <- i + 1

Y1 <- Vectorize(rcusp)(1, a1 * X1, b0)

data <- data.frame(X1, Y1) # collect ‘measured’ variables in data

fit <- cusp(y ~ Y1, alpha ~ X1, beta ~ 1, data)

sf <- summary(fit)

dat[i, ] <- c(b0, sf$r2lin.r.squared[1], sf$r2cusp.r.squared[1],

sf$r2lin.bic[1], sf$r2cusp.bic[1],

sf$r2lin.aic[1], sf$r2cusp.aic[1])

}

par(mar = c(4,5,1,1))

matplot(dat[,1], dat[,4:5], ylab = 'Bic', xlab = 'b0', bty = 'n', type = 'b',

pch = 1:2, cex.lab = 1.5)

legend('right', legend = c('linear','cusp'), lty = 1:2, pch = 1:2,

col = 1:2, cex = 1.5)

abline(v = 0, lty = 3)

text(-.5, 800, 'no hysteresis', cex = 1.5)

text(.5, 800, 'hysteresis', cex = 1.5)

3.5.2.2 Empirical examples

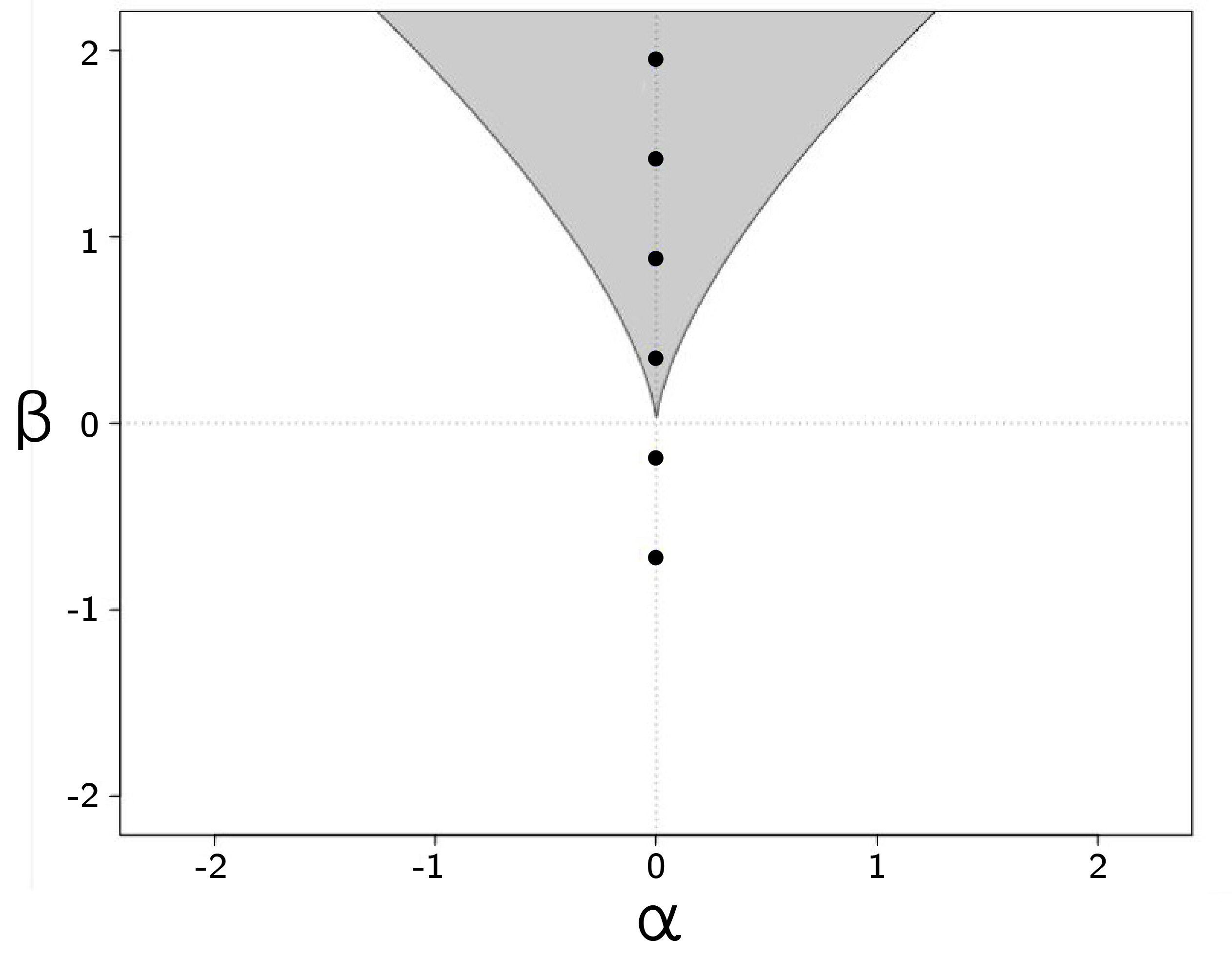

In Grasman, van der Maas, and Wagenmakers (2009), we present several examples with real data. As another example, we use Stoufer’s data, which we used as an example of divergence before (see figure 3.21).

x <- read.table('data/stoufer.txt')

colnames(x) <- c('IntensityofFeeling', 'Attitude')

fit <- cusp(y ~ Attitude, alpha ~ IntensityofFeeling,

beta ~ IntensityofFeeling, x)

summary(fit)Inspection of the parameter estimates shows that, as expected, intensity of feeling only loads on the splitting axis and not on the normal axis. Figure 3.24 shows the location of the data in the bifurcation set (plot(fit)).

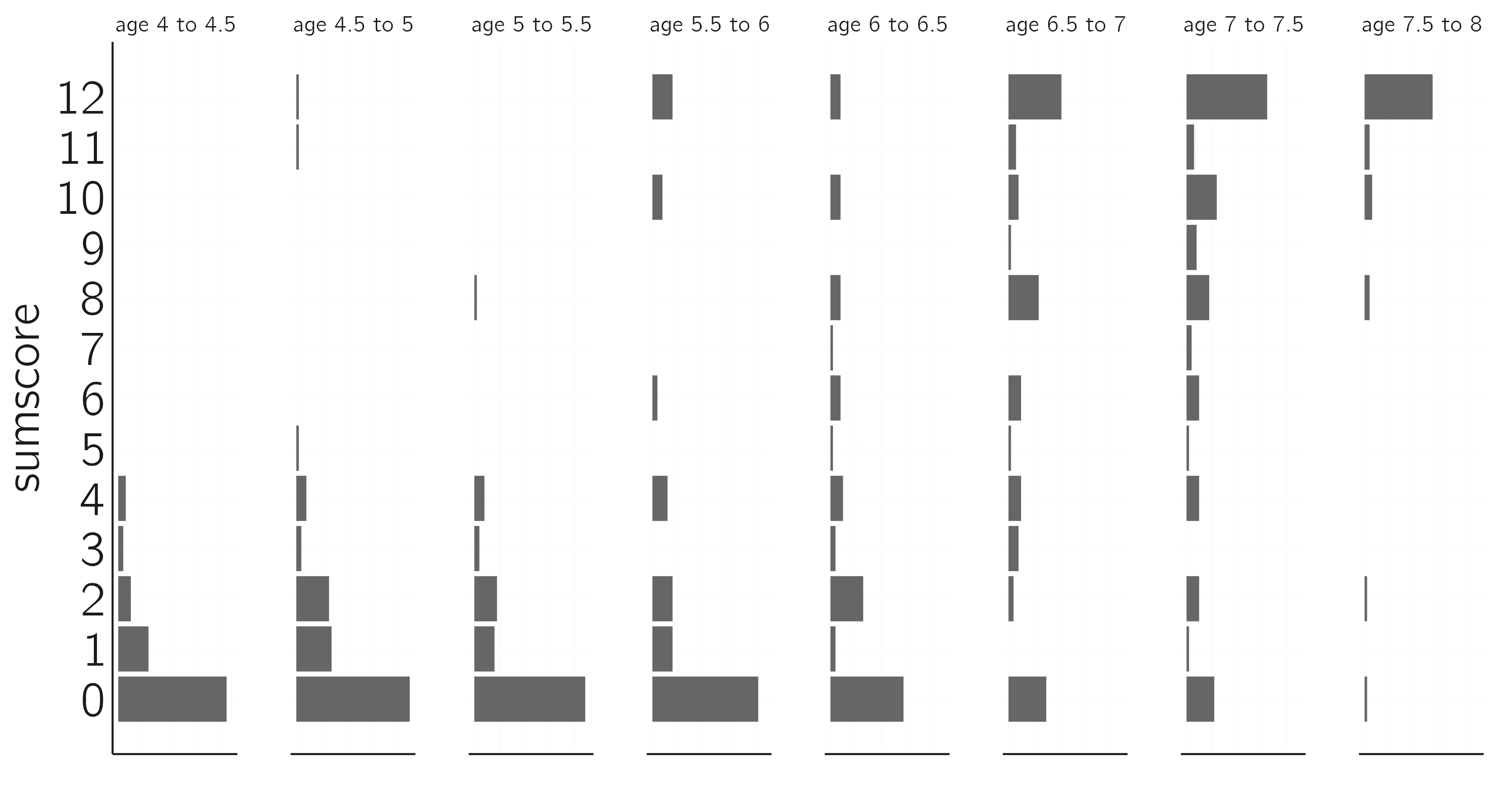

Another example is the conservation dataset of Bentler (1970), which contains the scores on a 12-item test from a conservation test of 560 children from eight different age groups (figure 3.25). These data are expected to be bimodal and to move along the normal axis (van der Maas and Molenaar 1992).

x <- read.table('data/bentler.txt', header = TRUE)

layout(t(1:8))

age <- c('age 4 to 4.5','age 4.5 to 5','age 5 to 5.5','age 5.5 to 6',

'age 6 to 6.5','age 6.5 to 7','age 7 to 7.5','age 7.5 to 8')

for(i in 1:8){

if(i == 1) {par(mar = c(4,3,2,1)); names = 0:12} else

{names = ''; par(mar = c(4,1,2,1))}

barplot(table(factor(x[x[,1] == i,2], levels = 0:12)),

horiz = TRUE, axes = FALSE,

main = age[i], xlab = '',

names = names, cex.main = 1.5, cex.names = 1.5)

}

fit <- cusp(y ~ score, alpha ~ age_range, beta ~ age_range, x)

summary(fit)

plot(fit)This is supported by results of the cusp fit. You can verify that a model with beta ~ 1 fits better according to the AIC and BIC.

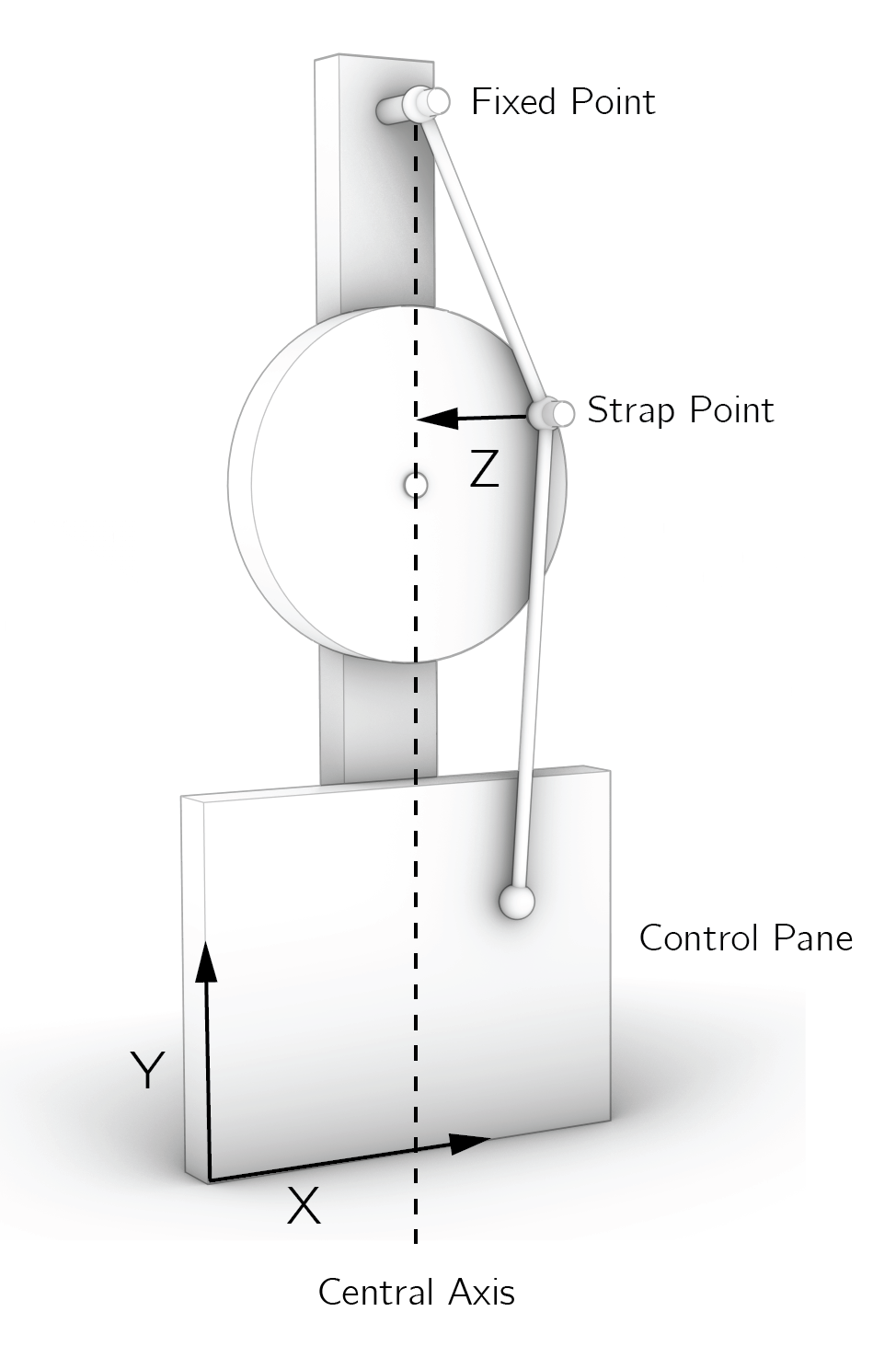

A great exercise we have often used in the classroom is to build a Zeeman machine, collect data with it, and fit the cusp model to the data (see Grasman, van der Maas, and Wagenmakers 2009 for details). Zeeman invented this machine to demonstrate the properties of the cusp. Our students were rewarded for the quality of the model and the artistic value of their Zeeman machine (figure 3.26).

3.5.2.3 Evaluation

A few final remarks: First, Cobb’s method can be used with cross-sectional data. Data points should be independent. To test for hysteresis in time series, other approaches are required. One option is to use hidden Markov models as in Dutilh et al. (2011).

Second, there are some issues with Cobb’s approach that are due to fundamental differences between probability distributions and potential functions. The latter can be transformed in many ways (so-called local diffeomorphisms) without changing the qualitative properties of the cusp. With the added constraint on probability distributions (area = 1), the same transformations can lead to qualitative effects, such as a change in the number of modes. Wagenmakers et al. (2005) suggest a solution to this problem for time series.

Third, two alternative approaches have been proposed. Both Guastello’s (1982) change score least square regression approach and the Gemcat approach (1987) use the first derivative of the cusp as point of departure. A problem with both approaches is that they do not distinguish between stable and unstable equilibrium states. Data points in the inaccessible region improve the fit of the model, whereas they should decrease the fit. Alexander et al. (1992) provide a detailed critique.

3.6 Criticism of catastrophe theory

Rosser (2007) speaks of the rise and the fall of catastrophe theory. The hype following the publication of Zeeman (1976) in Scientific American 12, in which he introduced the phenomenological application of catastrophe theory in the behavioral and social sciences, led to a strongly worded reply by Zahler and Sussmann (1977) in Nature.

Because people still refer to this paper when we use catastrophe theory in our work, I will briefly respond to Zahler and Sussmann’s main points of criticism. In their introduction, they state that there may be legitimate uses of catastrophe theory in physics and engineering.

They raise 10 points, some of which I have already addressed. For example, their first point is about how sudden a jump actually is, but they call this a less serious criticism. As I explained earlier, it is not the suddenness that matters but whether or not the intermediate states are unstable.

A number of points are about inferring a cusp from data, which was indeed done rather superficially in Zeeman’s earlier work. They point out that there are no testable predictions, that the location of the cusp can be shifted, and that there is no way to decide whether the data fit the cusp. I hope to have shown that these problems are largely solved: the catastrophe flags allow us to make new testable predictions, and with Cobb’s maximum likelihood approach we can fit the model as we would do with any other statistical model in modern science. Of course, one can be critical of the use of statistics in psychology and the social sciences, but these criticisms are not specific to catastrophe theory.

Another, somewhat inconsistent, line of criticism is that many catastrophe models in psychology and the social sciences are just wrong and inconsistent with the data (which could be true), while also not falsifiable. But you cannot have it both ways: if it is wrong or inconsistent with the data, it is falsifiable. Nevertheless, I agree that it is important to think about falsifiability. Theories in psychology tend to be moving targets. As soon as someone finds an empirical result that contradicts the theory, the theory is quickly modified.

Then Zahler and Sussmann point out that catastrophe theorists often try to make a discrete variable into a continuous one. Their example is aggression, which they believe is inherently discrete. They call Zeeman’s interpretation of aggression as a continuous family of behaviors absurd and utterly meaningless. This may be a bit strong. We can think of situations in which aggression can vary from mild to severe, or from verbal to physical, directed at a person’s belongings, mild physical directed at the person, to severe physical. A rich ordering of aggressive acts is very useful for describing domestic violence. Sometimes the change along these acts or variants is gradual, and other times sudden. Whether such an ordering can be treated as a quantitative continuum is one of the most difficult questions in our field (Borsboom et al. 2016; Michell 2008).

Zahler and Sussmann’s final point is that there are better alternatives, such as quantum mechanics, discrete mathematics, and bifurcation theory. There is work on quantum mechanics in psychology (especially in the context of consciousness), but whether it will lead to breakthroughs in this field remains to be seen. Discrete mathematics may be an alternative in some cases (e.g., to model symbolic thinking). I see catastrophe theory as a special branch of bifurcation theory, especially useful when the system under study is difficult to describe in terms of mathematical equations. This goes back to the distinction between phenomenological and mechanistic models. I think we should put more effort into developing mechanistic models based on first principles. More on this in the next chapters.

Loehle (1989) presents an excellent discussion on the usefulness of catastrophe theory in the context of modeling ecosystems. He concludes that “an unresolved problem in applying catastrophe models is that of testing the goodness of fit of the model to data,” but this problem has now been largely solved.

3.7 Conclusion

Psychologists are often concerned with psychological types and classes, stages and phases, and the transitions between them. Our thinking about transitions becomes much clearer and more advanced when we know the basics of bifurcations.

Catastrophe theory comes with a toolbox for the behavioral and social sciences. We can build phenomenological models, test for catastrophe flags, and even fit cusp models to data. With the development of this toolbox, most of the criticisms of catastrophe theory lose their relevance.

However, there is room for improvement. Phenomenological models have limited explanatory power. As explained in the next chapter on dynamical system models, it is possible to create more mechanistic models that support the use of phenomenological models. In Chapter 6, Section 6.3.3, another option is introduced using networks. I will demonstrate that the behavior of the Ising network model for attitudes is governed by the cusp model, which is very similar to the cusp model proposed for the attitude toward abortion.

3.8 Exercises

The equilibria of the fold are \(X = \pm \sqrt{\frac{a}{3}}\). This can be checked by setting the first derivative to 0. Show this. (*)

In Zeeman’s dog aggression model, fear and rage are “rotated” control variables. How can we translate this to a model with unrotated axes? Provide the equations that specify the normal and splitting axis as function of fear and rage. (*)

Derive the equation for the bifurcation lines of the cusp (\(27a^{2} = 4b^{3})\), by setting the first and second derivatives to 0. Plot the bifurcation lines in GeoGebra or Desmos. (**)

Some insight into the butterfly catastrophe \(V(X) = {- aX - bX^{2} - cX}^{3} - dX^{4} + X^{6}\) can be gained by entering the equation in free online graphing calculators such as Desmos or GeoGebra. Set \(a\), \(b\), \(c\), \(d\) to 0, -5, 0, 5. Then start varying \(a\) and \(c\). What is the difference in the effect of these two parameters on the appearance and disappearance of attractors? (**)

Set up a phenomenological cusp for falling in love. Follow my guidelines (see Section 3.4.2). (**)

Check whether indeed the Bentler data fit better when

age_rangeonly loads on the normal axis (according to the AIC and BIC). What is the correct specification ofbetaincusp()in this case? (*)What is the best fitting cusp model (according to the BIC) for this tricky dataset created with this R code? Why? (**)

n <- 500 z <- Vectorize(rcusp)(1, .7 * rnorm(n), 2 + 2 * rnorm(n)) # sample z x <- rnorm(n) y <- rnorm(n) data <- data.frame(z, x, y) # collect variables in dataBuild a Zeeman machine, collect data, and fit the cusp (see Example III of Grasman, van der Maas, and Wagenmakers 2009). What is your best fitting model? Provide a plot of the data in the bifurcation set and a picture of your Zeeman machine. (**)

Learning a particular conservation task does seem to be rather sudden, but there could easily be two years between learning conservation of number and conservation of volume (Kreitler and Kreitler 1989). This is inconsistent with the stage theory.↩︎

A related and very similar distinction is that between a subcritical bifurcation and a supercritical bifurcation.↩︎

As we will see in Section 3.5.2.1, the statistical equivalent of the quadratic potential function is the normal distribution, the most popular distribution in our statistical work.↩︎

The cubic equation cannot be solved easily. This is due to the fact that the cusp is not a function of the form \(y = f(x)\). Functions assign to each element of \(x\) exactly one element of \(y\). But in bistable systems we assign two values of \(y\) to one value of \(x\).↩︎

Perhaps better to call this view anti-choice.↩︎

I note that the butterfly catastrophe and the butterfly effect in chaos theory are completely unrelated concepts.↩︎

Given these guidelines and examples, it is an interesting exercise to develop one’s own cusp model, for example, for falling in love. This is a tricky exercise.↩︎

The is method finds the parameter values that make the observed data most probable.↩︎

Many different notations exist for this. Perhaps clearer is \(dX(t) = - V^{'}\left( X(t) \right)dt + \sigma dW(t)\), as both \(dX\) and \(dW\) depend on time.↩︎

For consistency with later chapters, I define \(Z\) differently from the notation in Grasman, van der Maas, and Wagenmakers (2009). It is the inverse of \(Z\) in that paper.↩︎

To see Zeeman at work, I recommend the BBC documentary Case Study Catastrophe Theory Maths Foundation Course (https://www.youtube.com/watch?v=myDvcvox1V4&t=1435s).↩︎